Open Source · Apache-2.0

NeoMind

Edge LLM Agent for IoT

A Rust-based edge AI platform that enables autonomous device management and automated decision-making through Large Language Models.

...

Latest Release

9+

LLM Backends

3

Platforms

What is NeoMind

Edge AI Platform for IoT Automation

NeoMind brings the power of Large Language Models to edge devices, enabling intelligent automation through natural language interaction.

Natural Language Control

Interact with your IoT infrastructure using plain language. Query device status, create automation rules, and execute commands through conversational AI.

Run LLMs at the Edge

Deploy AI models directly on edge devices with Ollama. No cloud dependency, full data privacy, and offline operation capability.

Intelligent Automation

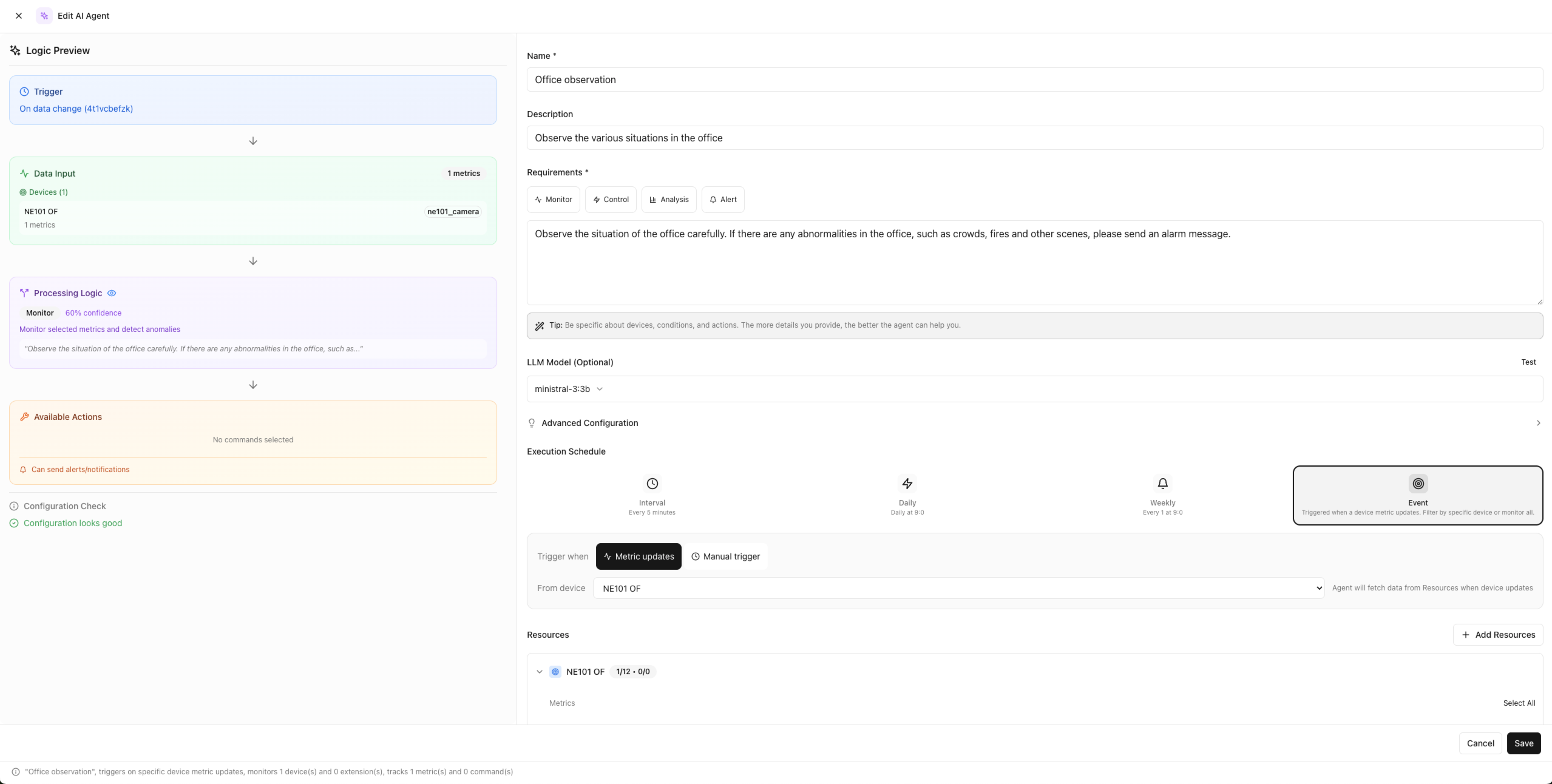

Create automation rules through natural language descriptions. The system understands your intent and generates executable automation logic.

AI-Powered Extensibility

Build custom extensions with AI assistance. Our Claude skill helps you quickly develop Native or WASM extensions. The unified type system makes extension development intuitive and powerful.

LLM as System Brain

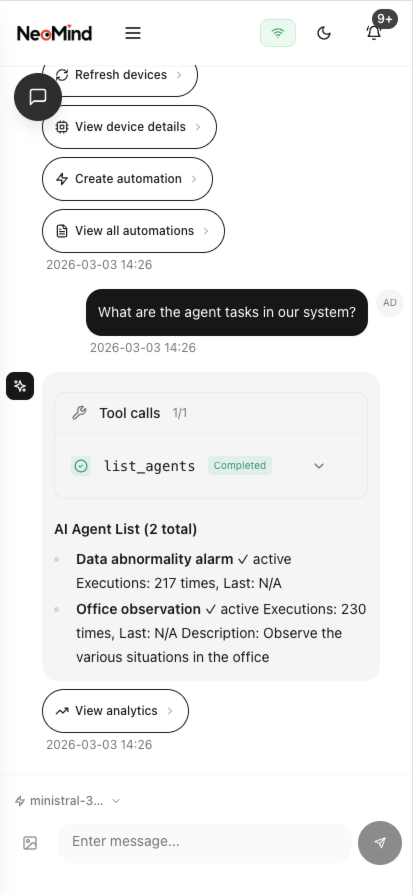

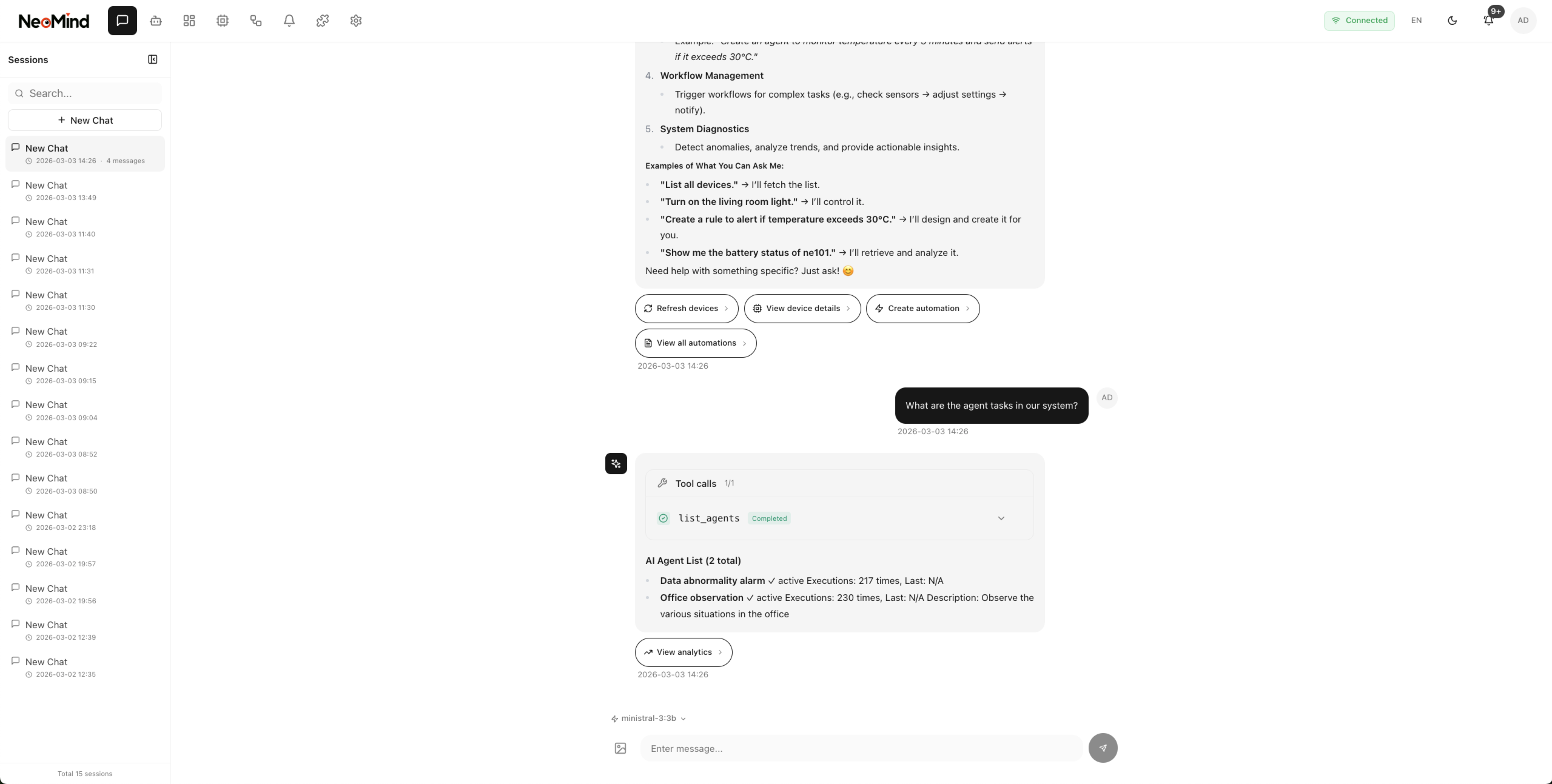

Natural Language Interface

Interactive chat for querying and controlling edge devices through natural language.

Interface

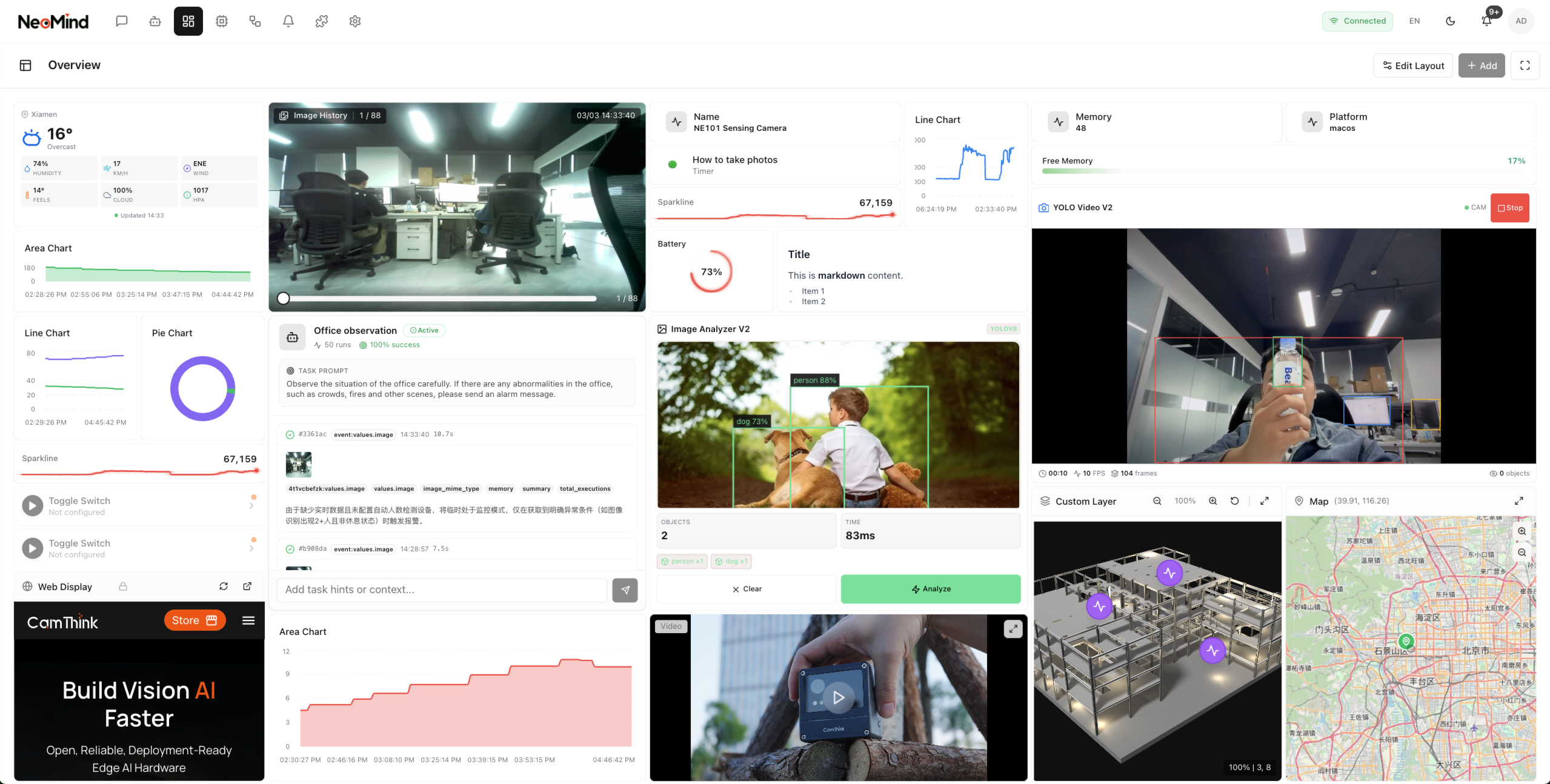

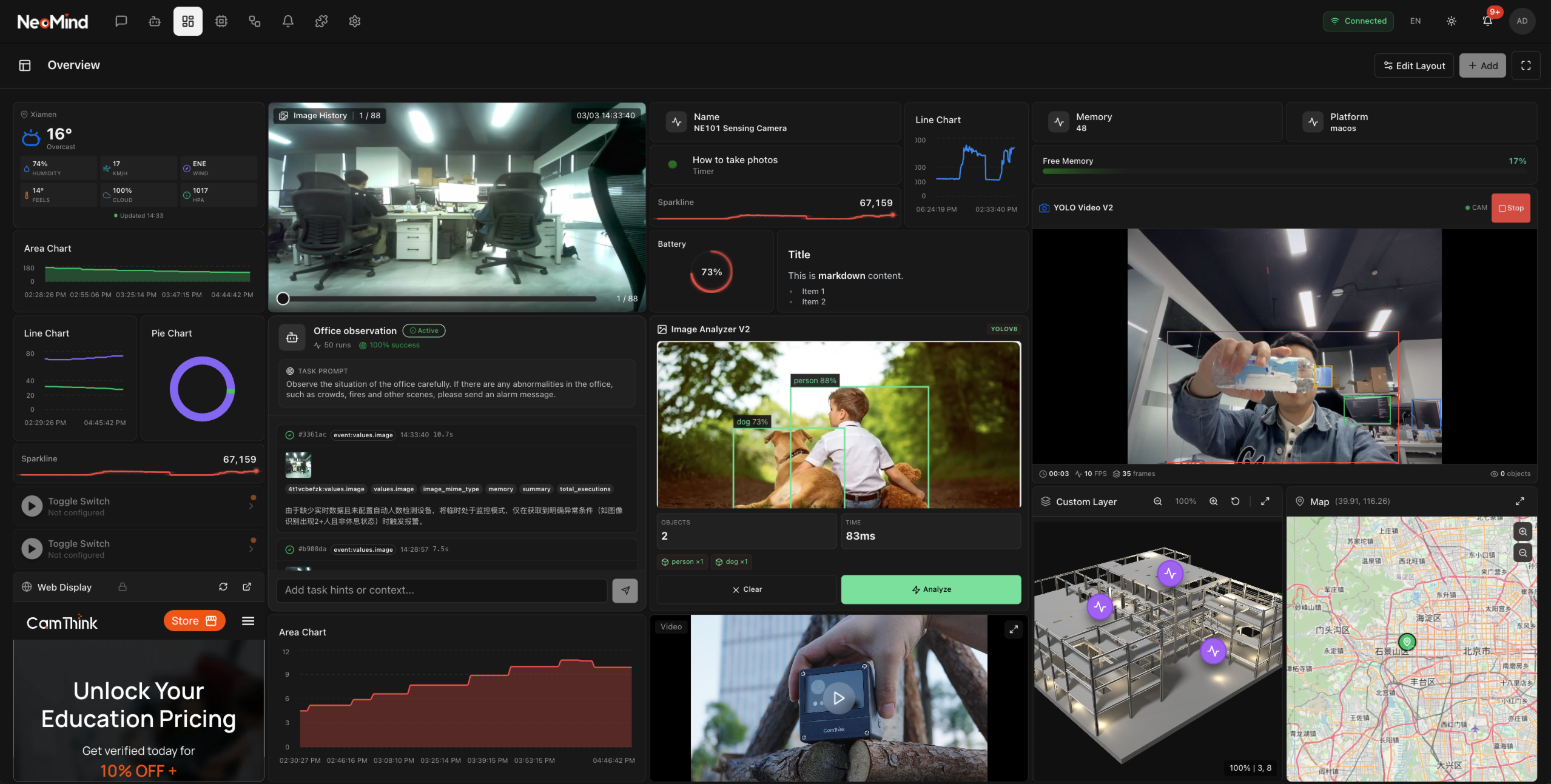

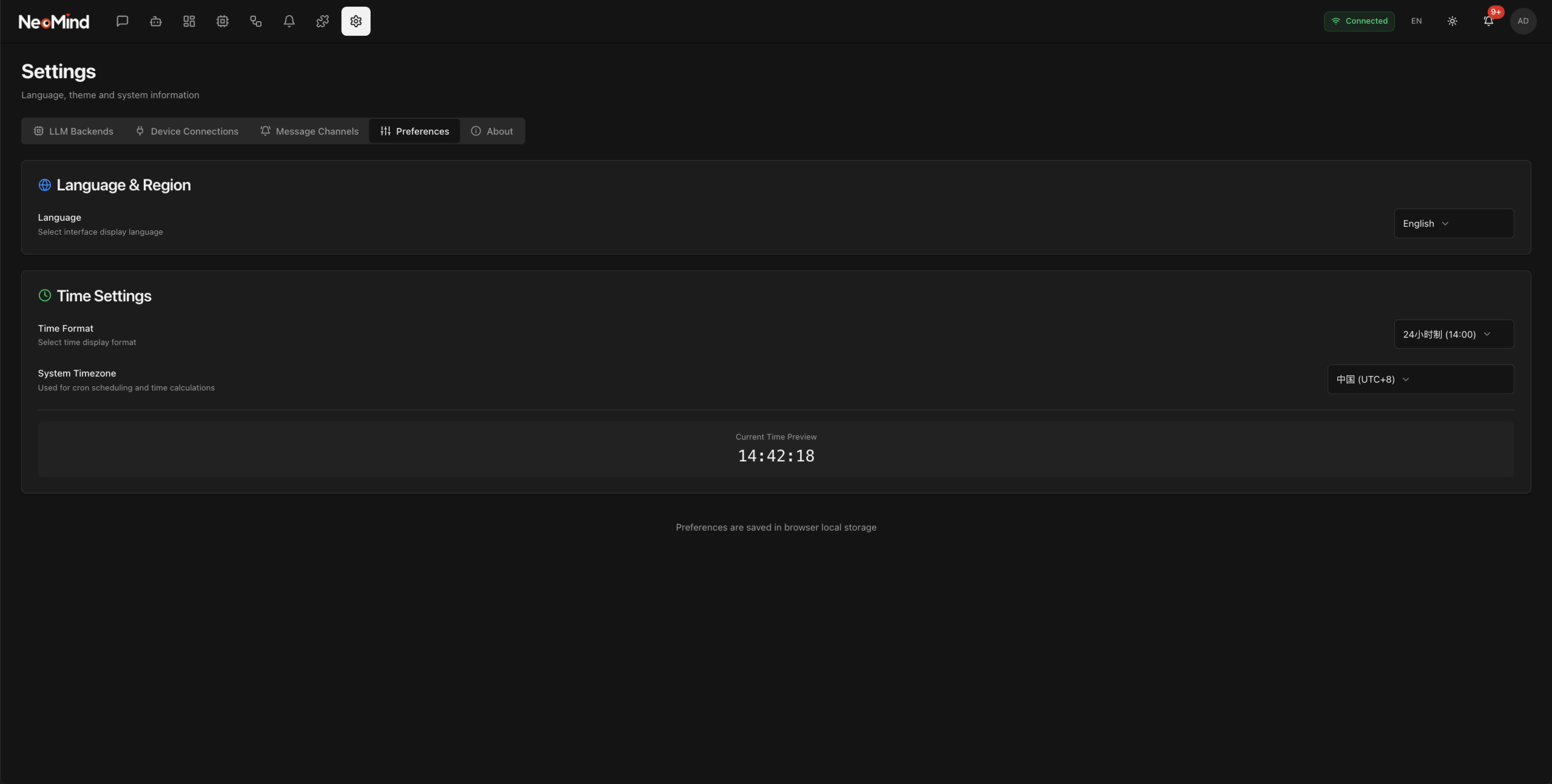

Platform Overview

Modern, responsive interface for desktop and mobile. Dark and light themes supported.

Core Features

Key Capabilities

Built with a modular, event-driven architecture for reliable IoT automation.

LLM as System Brain

Interactive chat interface for querying and controlling devices. AI agents with tool calling capabilities. Execute real system actions through LLM function calling.

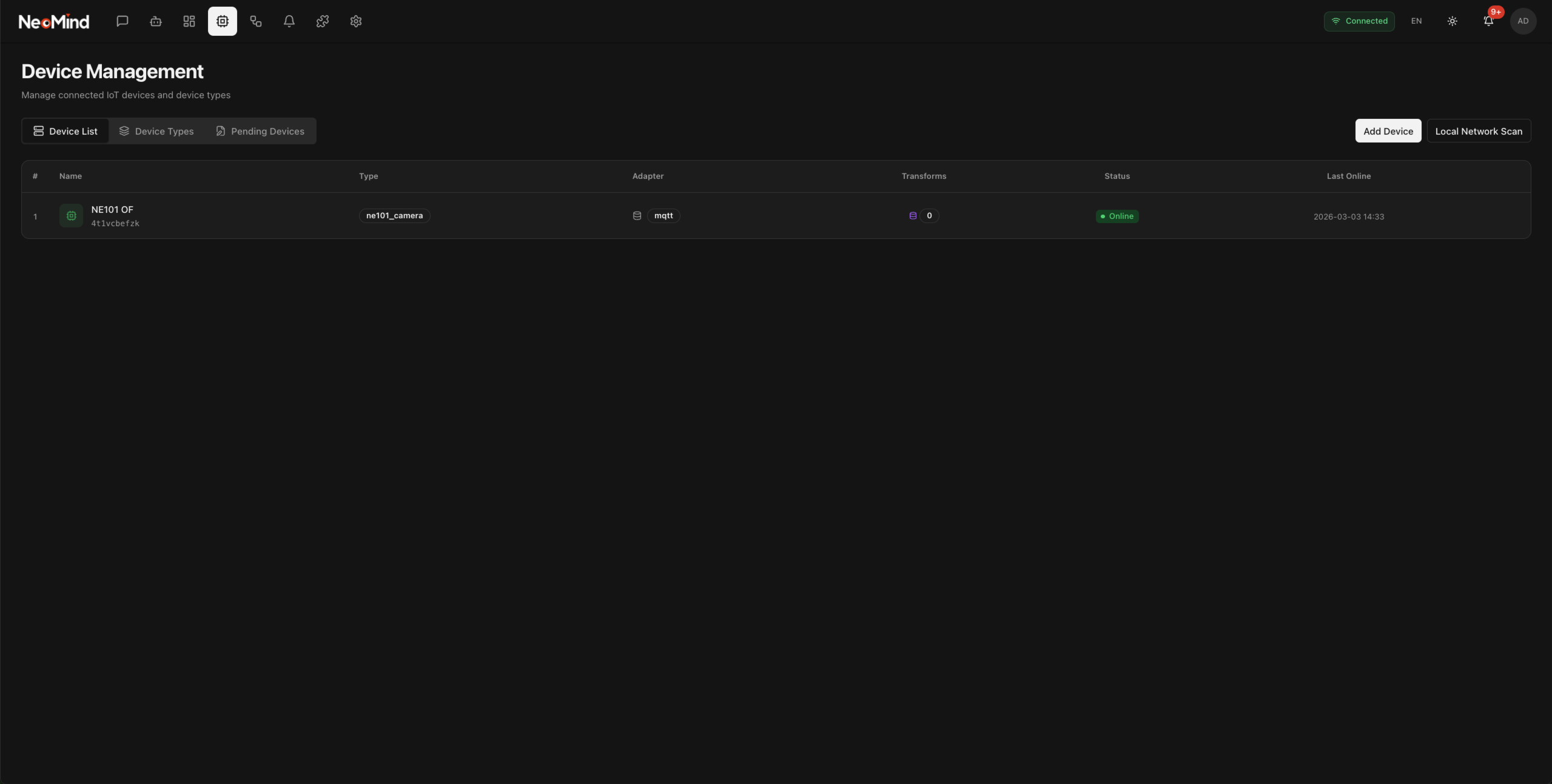

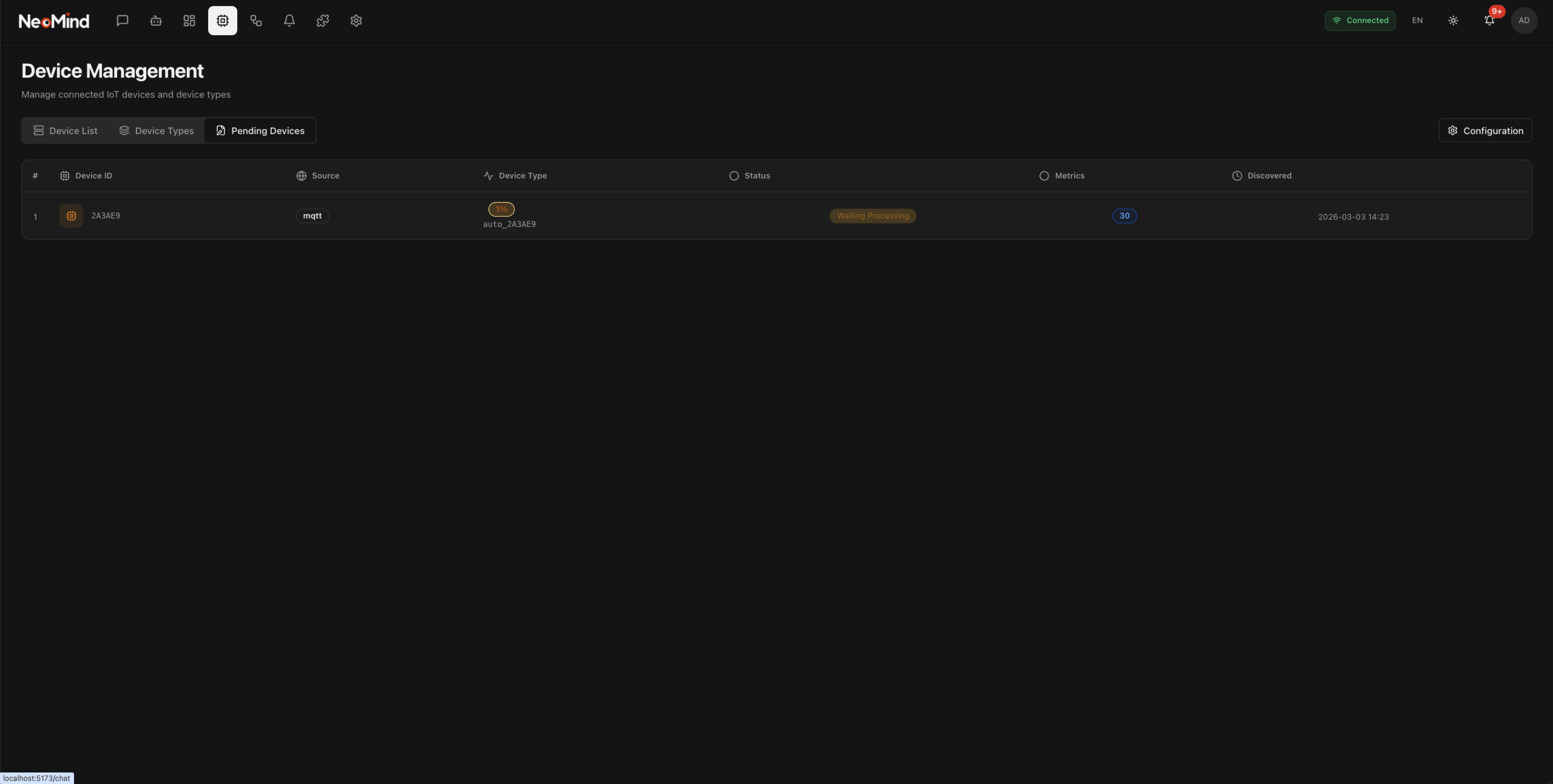

Modular Device Integration

MQTT protocol with embedded broker. Automatic device detection and type registration. HTTP/Webhook adapters for flexible integration. AI-assisted device onboarding.

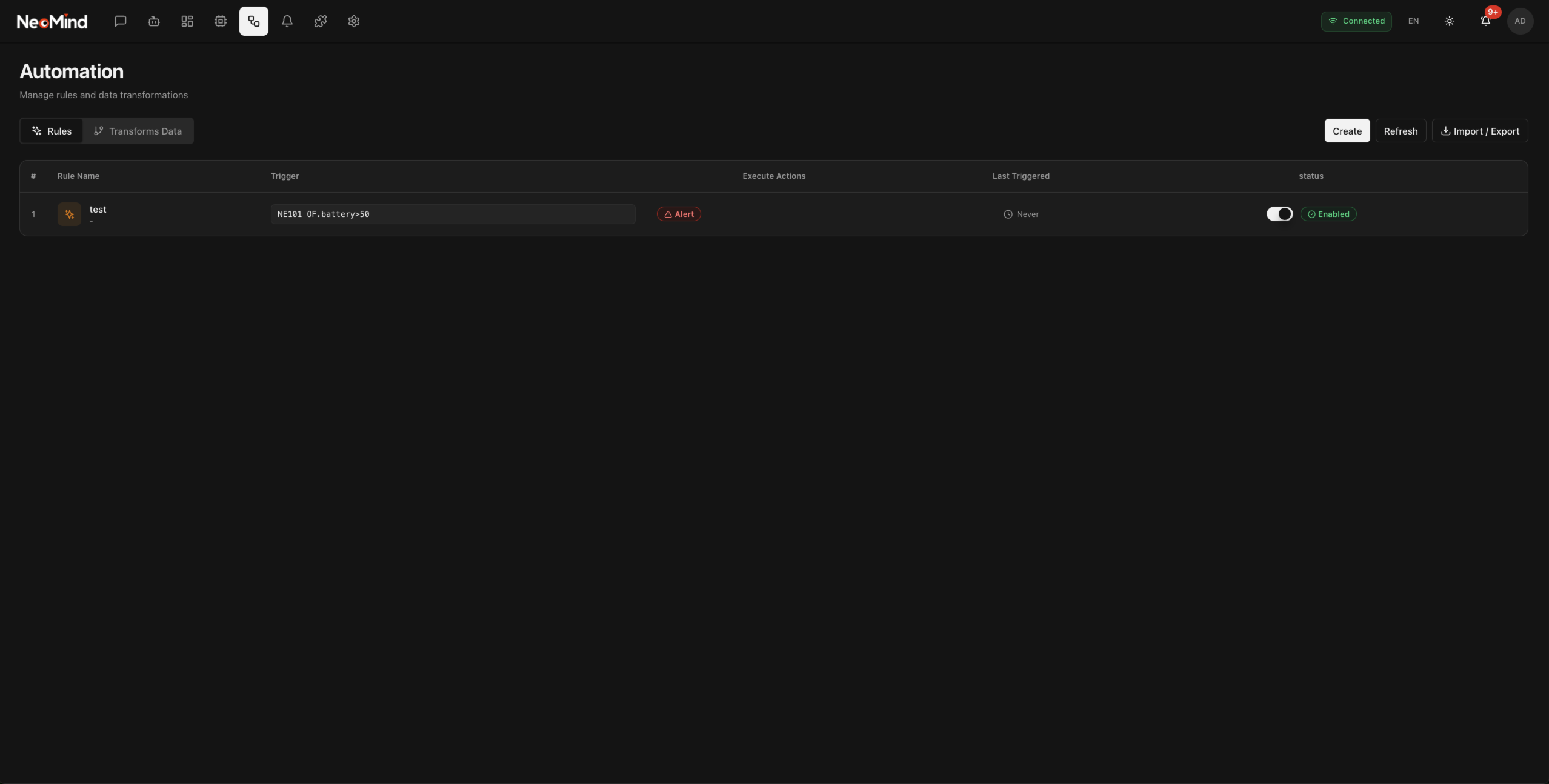

Event-Driven Architecture

Real-time response - device changes automatically trigger rules and automations. Decoupled design via event bus. Multiple transports: REST API, WebSocket, SSE.

Complete Storage System

Time-series storage with redb. Device states and automation execution records. Three-tier LLM memory (short/mid/long-term). Vector search across devices and rules.

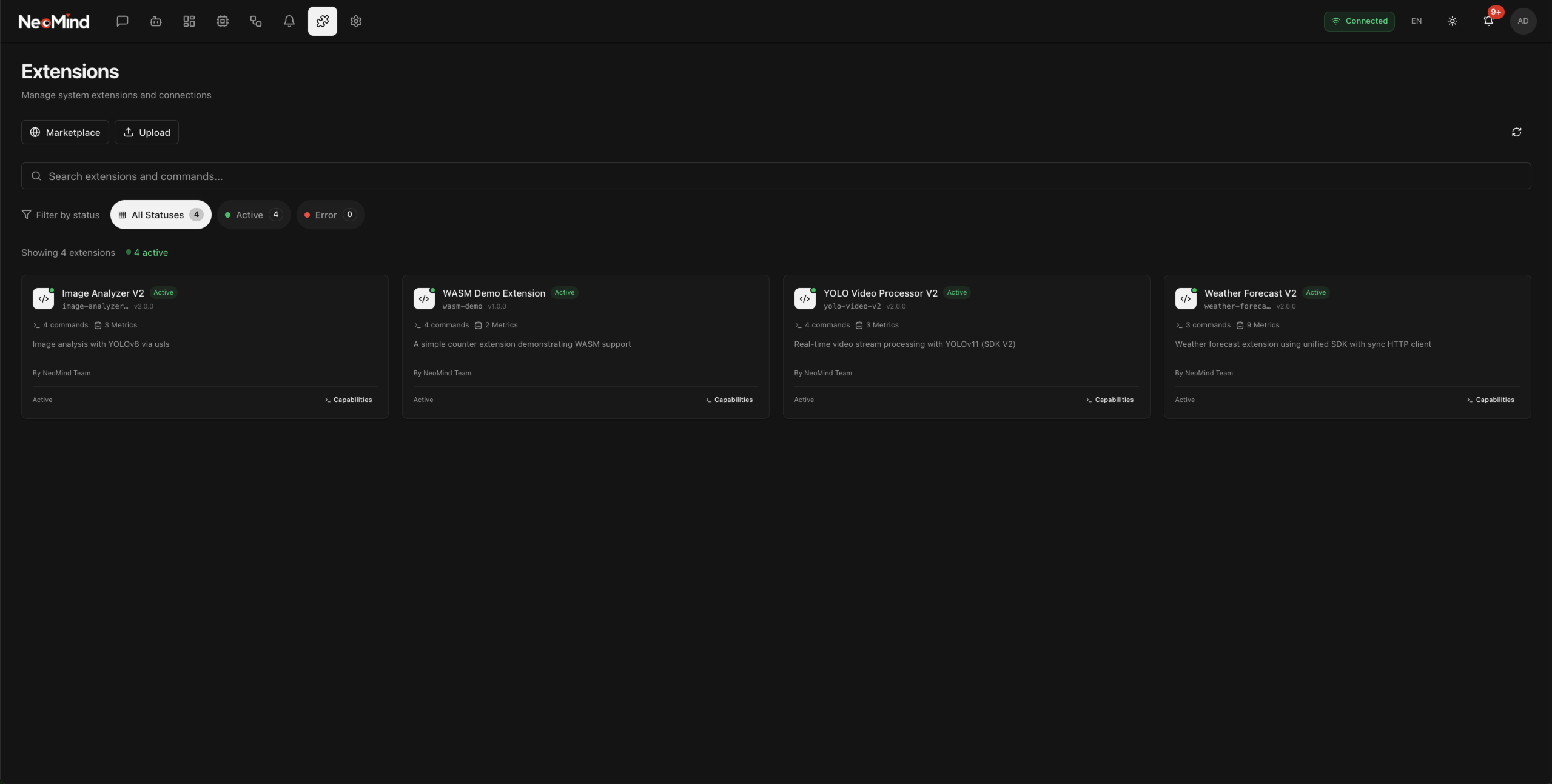

Unified Extension System (V2)

Dynamic loading at runtime. Support for Native (.so/.dylib/.dll) and WASM extensions. Extensions use same type system as devices. Sandboxed execution environment.

Desktop Application

Cross-platform: macOS, Windows, Linux native apps. Modern UI with React 18 + TypeScript + Tailwind CSS. System tray for background operation. Built-in auto-update.

Architecture

System Architecture

Event-driven architecture with unified event bus for decoupled component communication.
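To make the decoupling concrete, here is a minimal sketch of a synchronous event bus in plain Rust. It is an illustration only, not NeoMind's implementation: the `Event` variants, the topic strings, and the `EventBus` API are all hypothetical names chosen for this example.

```rust
use std::collections::HashMap;

/// Hypothetical event type; the variants are illustrative only.
#[derive(Debug, Clone, PartialEq)]
pub enum Event {
    MetricUpdated { device: String, metric: String, value: f64 },
    RuleTriggered { rule: String },
}

impl Event {
    /// Topic name used to route the event to subscribers (assumed naming).
    fn topic(&self) -> &'static str {
        match self {
            Event::MetricUpdated { .. } => "metric.updated",
            Event::RuleTriggered { .. } => "rule.triggered",
        }
    }
}

type Handler = Box<dyn FnMut(&Event)>;

/// Publishers and subscribers only share topic names, never direct references.
#[derive(Default)]
pub struct EventBus {
    handlers: HashMap<&'static str, Vec<Handler>>,
}

impl EventBus {
    /// Registers a handler for one topic.
    pub fn subscribe(&mut self, topic: &'static str, handler: impl FnMut(&Event) + 'static) {
        self.handlers.entry(topic).or_default().push(Box::new(handler));
    }

    /// Delivers the event to every handler on its topic and returns how many ran.
    pub fn publish(&mut self, event: &Event) -> usize {
        match self.handlers.get_mut(event.topic()) {
            Some(list) => {
                for handler in list.iter_mut() {
                    handler(event);
                }
                list.len()
            }
            None => 0,
        }
    }
}
```

In this sketch a rules component could subscribe to `"metric.updated"` without knowing which device adapter published the reading. A production bus would more likely be asynchronous; that part is left out to keep the example dependency-free.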

Desktop / Web UI

React 18 + TypeScript · Tailwind CSS + Radix UI · Tauri 2.x Desktop

API Gateway

REST API · WebSocket · SSE · OpenAPI Docs

Event Bus

Central message broker for decoupled communication

LLM Agent

Chat Interface · Tool Calling · Memory System

Devices

MQTT Broker · HTTP/Webhook · Auto Onboarding

Automation

Rules Engine · Data Transforms · DSL Parser

Extensions

Native Libraries · WASM Modules · Sandbox

Storage Layer

Time-Series (redb) · State Storage · Vector Search · LLM Memory

Core Concepts

Unified Type System

Devices and extensions share the same type system using Metrics and Commands.

Metrics

Time-series data points representing sensor readings, state values, or computed measurements.

Example Metrics

Float

Integer

Boolean

String

JSON

Commands

Actions that can be executed on devices or extensions. Supports typed parameters with validation.
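As a rough illustration of typed parameters with validation, the sketch below checks command arguments against a declared schema. The `Value`, `DataType`, and `ParamSpec` types and the `validate` function are hypothetical and are not taken from the NeoMind SDK; the JSON type is omitted so the example needs only the standard library.

```rust
use std::collections::HashMap;

/// Hypothetical runtime value, mirroring the scalar types listed for metrics.
#[derive(Debug, Clone, PartialEq)]
pub enum Value {
    Float(f64),
    Integer(i64),
    Boolean(bool),
    String(String),
}

#[derive(Debug, Clone, Copy, PartialEq)]
pub enum DataType {
    Float,
    Integer,
    Boolean,
    String,
}

/// Hypothetical parameter declaration: a name, a type, and an optional float range.
pub struct ParamSpec {
    pub name: &'static str,
    pub data_type: DataType,
    pub range: Option<(f64, f64)>,
}

/// Returns Ok(()) when every declared parameter is present, well typed, and in range.
pub fn validate(specs: &[ParamSpec], args: &HashMap<String, Value>) -> Result<(), String> {
    for spec in specs {
        let value = args
            .get(spec.name)
            .ok_or_else(|| format!("missing parameter `{}`", spec.name))?;
        let type_ok = matches!(
            (spec.data_type, value),
            (DataType::Float, Value::Float(_))
                | (DataType::Integer, Value::Integer(_))
                | (DataType::Boolean, Value::Boolean(_))
                | (DataType::String, Value::String(_))
        );
        if !type_ok {
            return Err(format!("parameter `{}` has the wrong type", spec.name));
        }
        // Range checks apply to float parameters only in this sketch.
        if let (Some((min, max)), Value::Float(v)) = (spec.range, value) {
            if *v < min || *v > max {
                return Err(format!("parameter `{}` is out of range", spec.name));
            }
        }
    }
    Ok(())
}
```

With a schema declaring `power` as Boolean and `value` as a Float in a 0 to 100 range, arguments equivalent to `{ "power": true, "value": 25.5 }` would pass, while an integer `value` or a missing `power` would be rejected before the command reaches a device.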

Example Commands

{ "power": true } Boolean Parameter

{ "value": 25.5 } Float Parameter

Extensions

Pluggable modules that extend platform capabilities. Use the same metrics/commands system as devices.

Extension Types

Define custom metrics

Expose commands

Poll data sources

Provide dashboard cards

Sandboxed execution

Features

Built-in Features

Comprehensive tools for monitoring, automation, and data processing.

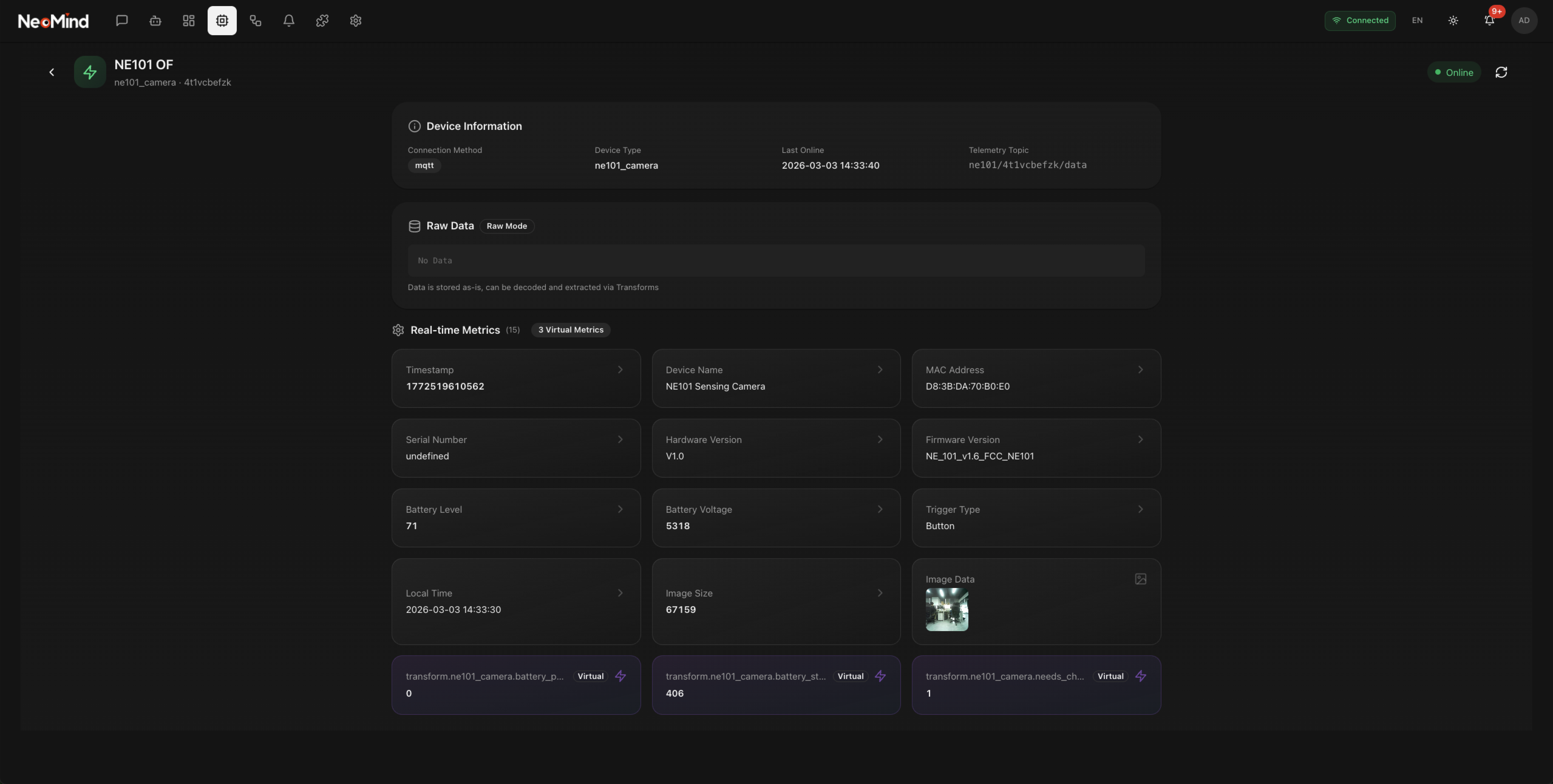

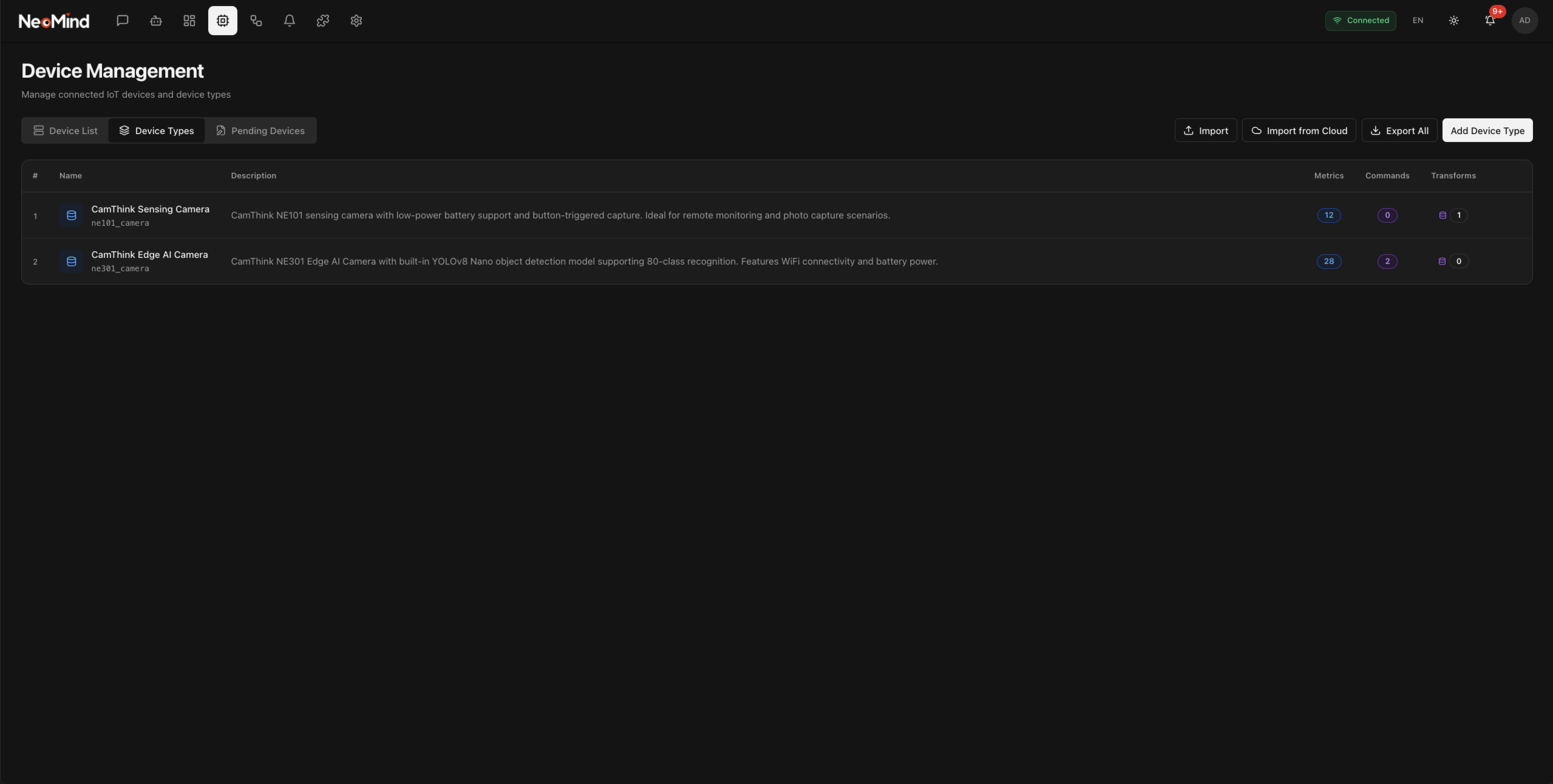

Device Management

Complete device lifecycle management with automatic discovery.

1. Discover

2. Onboard

3. Monitor

MQTT / HTTP / Webhook · Auto Discovery · Real-time Monitoring · Command Retry

Dashboard

Real-time visualization with customizable widgets and drag-drop layout.

Drag & Drop · Real-time Updates · Multiple Dashboards · Template Library

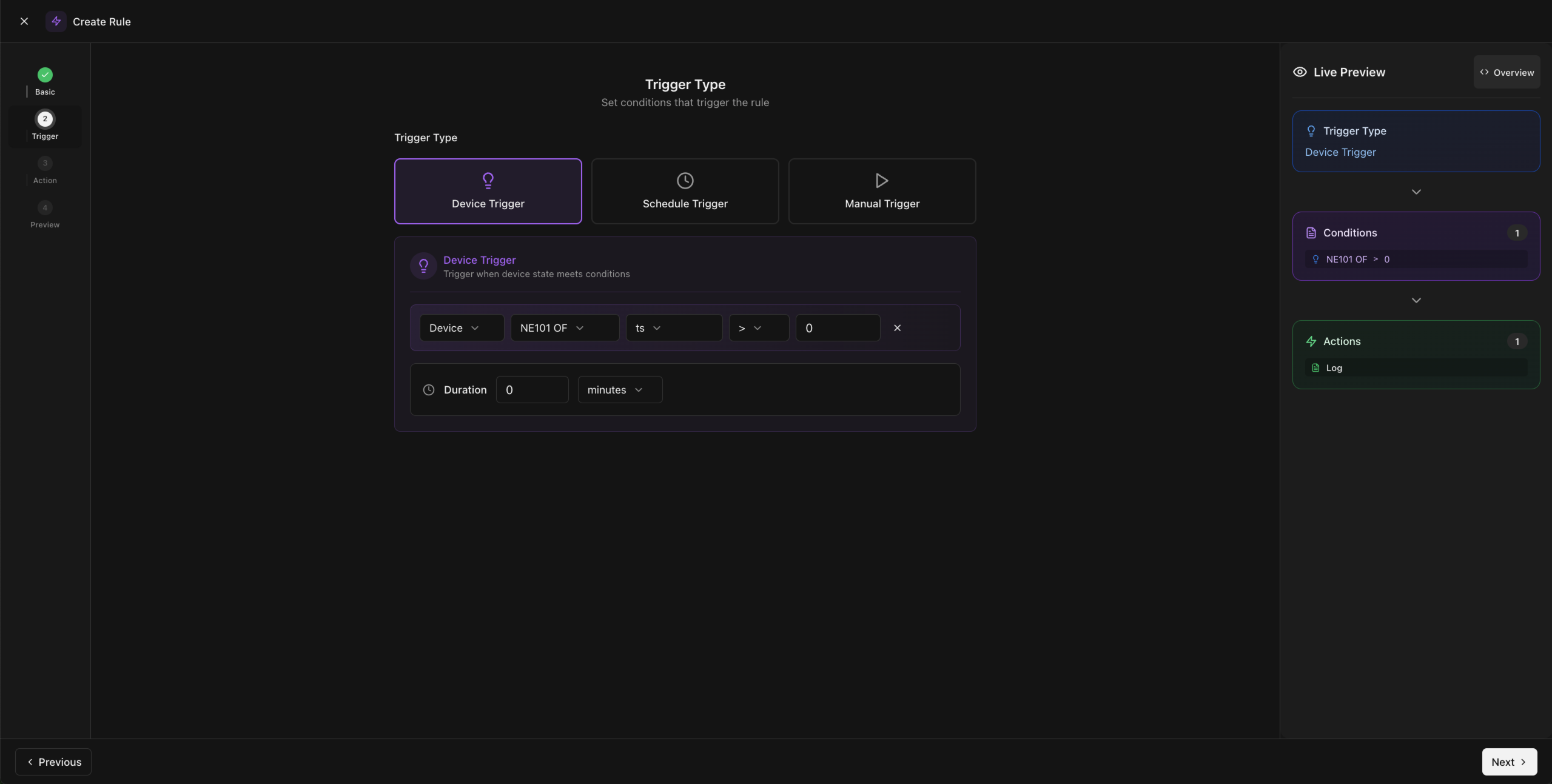

Rules Engine

Event-driven automation with DSL and natural language creation.

RULE "Alert" WHEN device.cpu > 85 FOR 5m DO send_alert() END

Natural Language → DSL · Condition Triggers · Duration Support · Execution History
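The `FOR 5m` clause in the rule above implies that the condition must hold continuously before the action runs. One way to model that is sketched here, under the assumption that a single breach produces a single alert until the value recovers. `ThresholdRule` and `observe` are hypothetical names, and this is not NeoMind's rules engine.

```rust
use std::time::Duration;

/// Hypothetical evaluator for a rule of the form `WHEN metric > threshold FOR duration`.
pub struct ThresholdRule {
    pub threshold: f64,
    pub hold_for: Duration,
    /// Timestamp at which the condition first became true, if it currently holds.
    breach_started: Option<Duration>,
    /// Set once the action has fired for the current breach.
    fired: bool,
}

impl ThresholdRule {
    pub fn new(threshold: f64, hold_for: Duration) -> Self {
        Self { threshold, hold_for, breach_started: None, fired: false }
    }

    /// Feeds one sample; `at` is the time since an arbitrary epoch.
    /// Returns true exactly once per breach, when the condition has held for `hold_for`.
    pub fn observe(&mut self, at: Duration, value: f64) -> bool {
        if value <= self.threshold {
            // Condition no longer holds: reset so a later breach starts a fresh timer.
            self.breach_started = None;
            self.fired = false;
            return false;
        }
        let started = *self.breach_started.get_or_insert(at);
        if !self.fired && at.saturating_sub(started) >= self.hold_for {
            self.fired = true;
            return true;
        }
        false
    }
}
```

For the example rule, `ThresholdRule::new(85.0, Duration::from_secs(300))` would ignore a short CPU spike and report a breach only after five uninterrupted minutes above 85.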

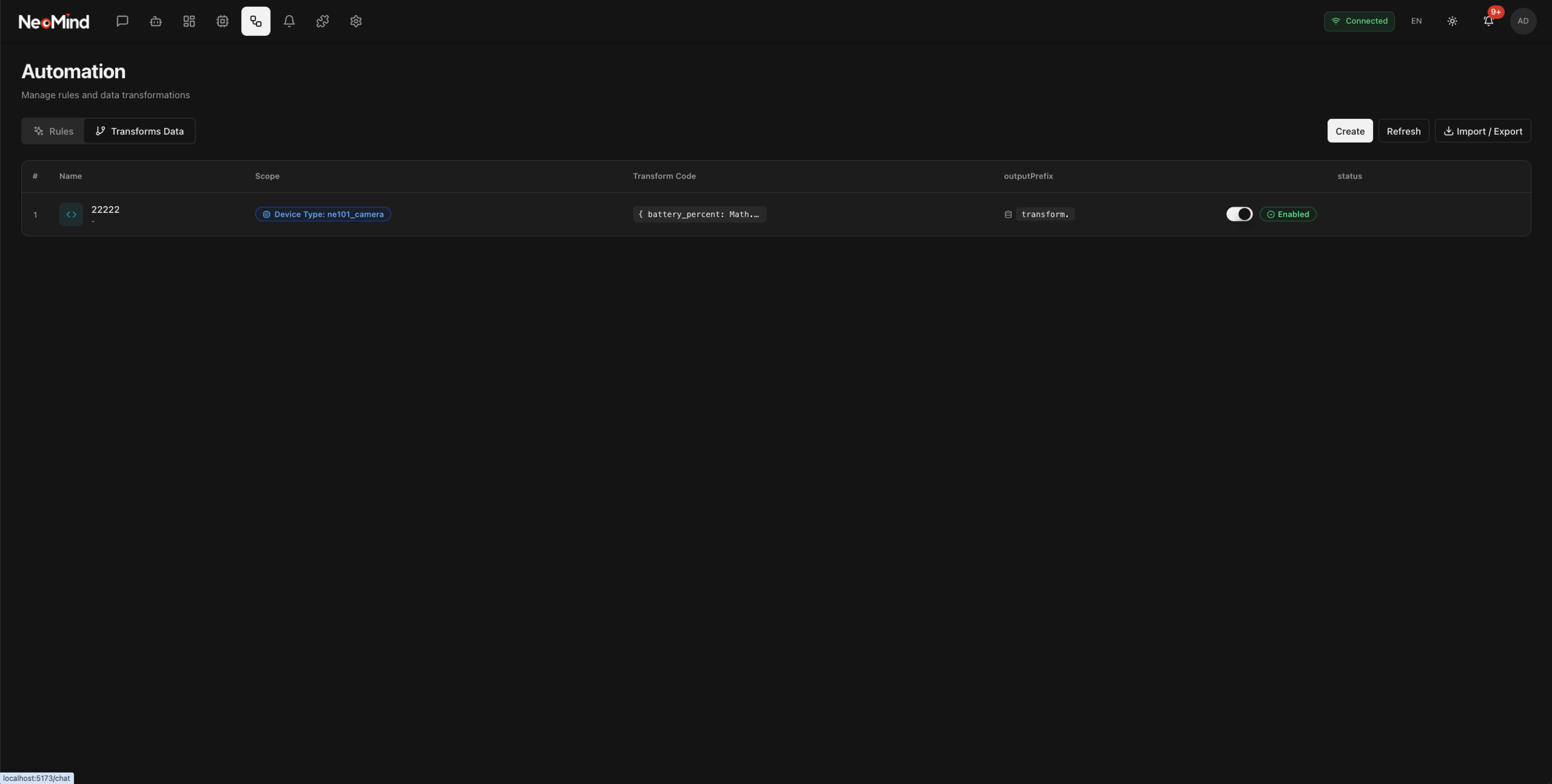

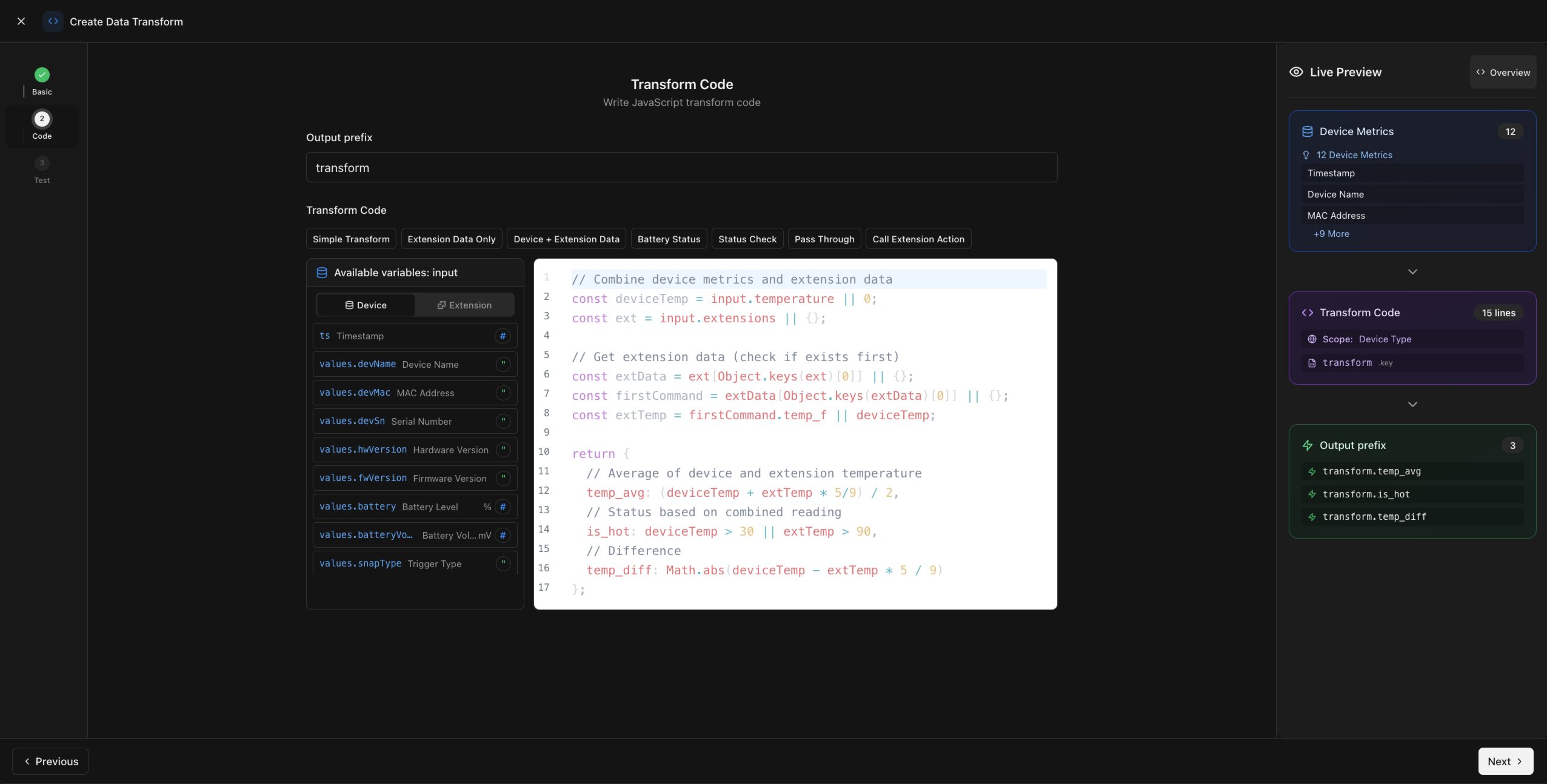

Data Transforms

Process and aggregate data from multiple sources into virtual metrics.

Sensor A + Sensor B + Sensor C → Avg Temp

Aggregation · Filtering · Virtual Metrics · Time Windows
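A virtual metric such as the average temperature above can be thought of as an aggregation over recent samples from several sources. The following sketch computes a windowed mean; `Sample` and `windowed_mean` are hypothetical names and the timestamps are plain seconds, chosen only for illustration.

```rust
/// Hypothetical reading from one source at a given time (seconds since an arbitrary epoch).
pub struct Sample {
    pub source: &'static str,
    pub at_secs: u64,
    pub value: f64,
}

/// Mean of all samples whose timestamp falls in [now - window, now].
/// Returns None when no sample falls inside the window.
pub fn windowed_mean(samples: &[Sample], now_secs: u64, window_secs: u64) -> Option<f64> {
    let start = now_secs.saturating_sub(window_secs);
    let (sum, count) = samples
        .iter()
        .filter(|s| s.at_secs >= start && s.at_secs <= now_secs)
        .fold((0.0, 0u32), |(sum, n), s| (sum + s.value, n + 1));
    if count == 0 {
        None
    } else {
        Some(sum / f64::from(count))
    }
}
```

Returning `None` for an empty window lets the caller skip the update rather than publish a misleading zero.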

Extensibility

AI-Powered Extension Development

Build custom extensions with AI assistance. Our Claude Skill helps you quickly and efficiently develop native or WASM extensions.

my_extension.rs Extension SDK

    use neomind_extension_sdk::prelude::*;

    struct WeatherExtension;

    declare_extension!(
        WeatherExtension,
        metadata: ExtensionMetadata {
            name: "weather.sensor".into(),
            version: "1.0.0".into(),
            description: "Weather data".into(),
            ..
        },
    );

    impl Extension for WeatherExtension {
        fn metrics(&self) -> &[MetricDefinition] {
            &[MetricDefinition {
                name: "temperature".into(),
                data_type: MetricDataType::Float,
                unit: "°C".into(),
                ..
            }]
        }
    }

AI-Assisted Development

Use our Claude Skill to generate extension code. Just describe your needs in natural language.

Native & WASM Support

Build extensions as native libraries (.so/.dylib/.dll) or WebAssembly modules.

Unified Type System

Extensions use the same type system as devices for seamless platform integration.

Dynamic Loading

Load and unload extensions at runtime without restarting the platform.

Sandboxed Execution

WASM extensions run in a secure sandbox with controlled resource access and memory limits.

Try the Claude Extension Skill

Type /neomind-extension create a weather sensor extension in Claude Code to get started.

5-Minute Quick Start

Start Developing in Minutes

Get your first NeoMind extension running with Claude Code

What happens next:

Extension scaffold generated

Rust project structure with SDK pre-configured

Metrics & commands defined

Auto-generated based on your requirements

Ready to compile

Just run cargo build to get your .so file

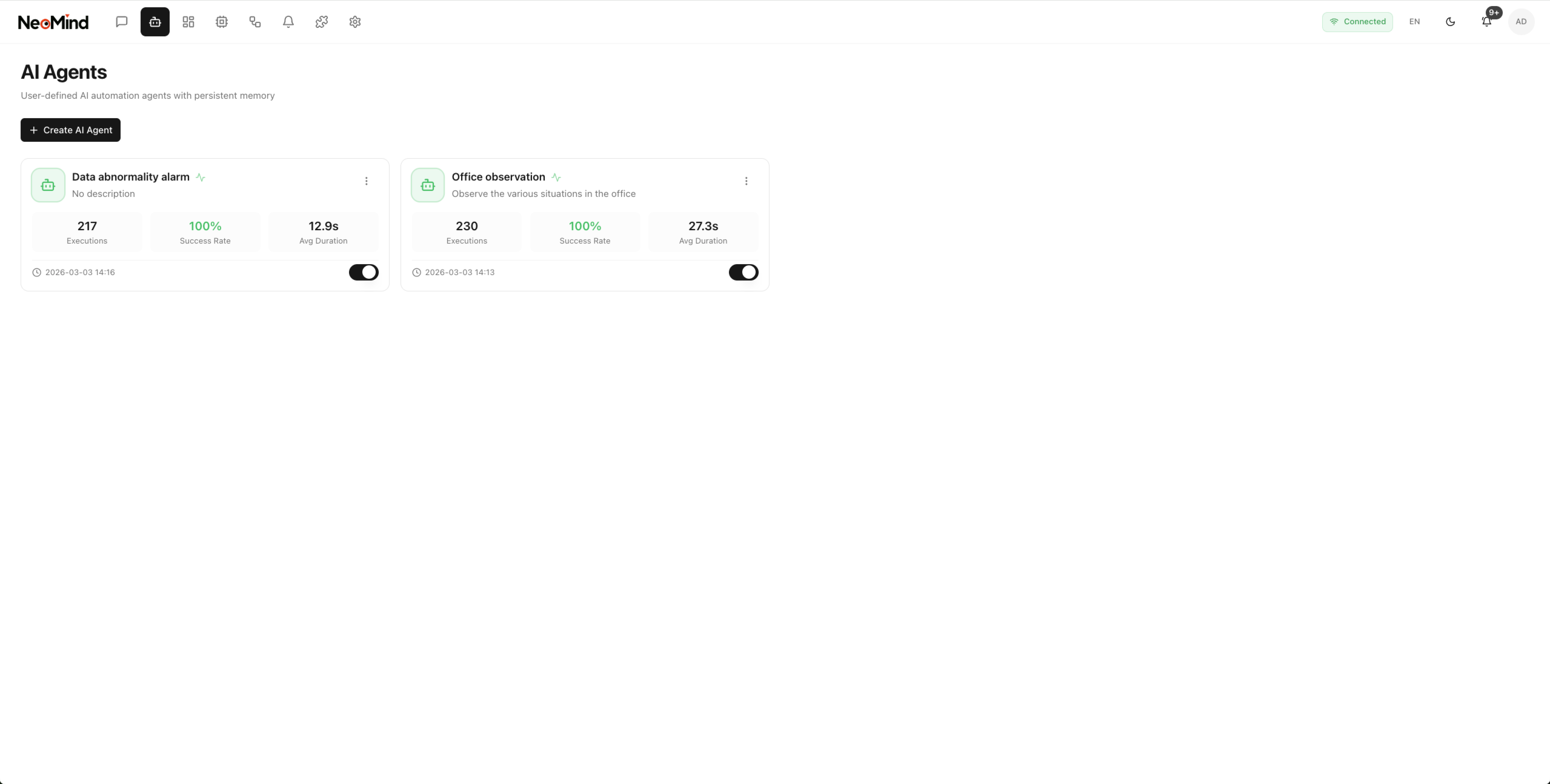

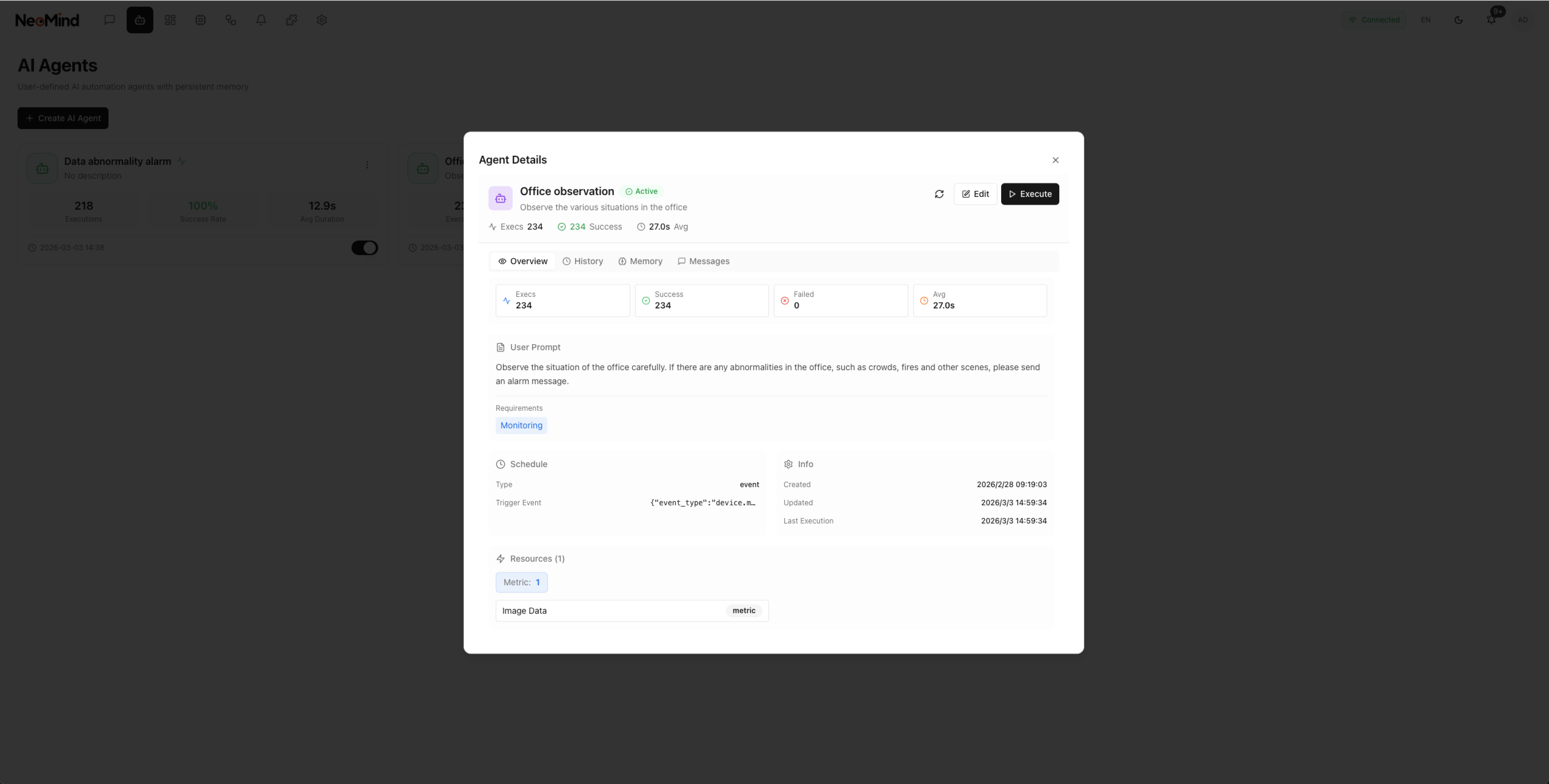

AI Core

LLM as System Brain

Intelligent automation through natural language with multimodal understanding, tool calling, and persistent memory.

NeoMind AI Agent Architecture

Data Sources & Entities

Devices

Metrics · Commands · States

Extensions

Native · WASM · Data

Automation

Rules · Transforms · Triggers

Input

Chat · Vision · API

Memory

Short-term · Session · Knowledge

LLM Agent

Intent · Tools · Reasoning

Tools

Device · Query · Rules

Output

Response · Action · Create

Built-in Tools & Actions

Device Tools

List Devices

Control Device

Query Data

Device Health

Automation Tools

Create Rules

List Rules

NL to Automation

Extension Tools

Extension Data

Extension Commands

Sync States

Metrics & States

Real-time Event Bus

Commands & Actions

Vision-First Design

Multimodal Understanding

NeoMind leverages vision-capable LLMs to understand images, charts, and visual data—perfect for industrial inspection, equipment monitoring, and visual anomaly detection.

Equipment Status Image Analysis

Visual Inspection & Anomaly Detection

Document & Chart Understanding

Video Frame Analysis Support

Recommended Vision Models

qwen3-vl:4b Balanced, 4B params

llama3.2-vision Edge, 11B params

gemma3:4b Edge, 4B params

ministral-3:3b Edge, 3B params

Supports 9+ LLM Backends

Run locally with Ollama or connect to cloud providers

Ollama Edge

OpenAI

Anthropic

Google

xAI

DeepSeek

Qwen

GLM

MiniMax

Custom

Use Cases

Application Scenarios

Built for edge AI developers and industrial IoT applications.

Industrial IoT

Monitor production lines, detect anomalies, and automate quality control with vision AI at the edge.

- Equipment Health Monitoring

- Visual Quality Inspection

- Predictive Maintenance

Smart Buildings

Intelligent building management with natural language control and automated energy optimization.

- Energy Management

- Occupancy Monitoring

- HVAC Automation

Smart Agriculture

Precision agriculture with environmental monitoring and automated irrigation systems.

- Soil & Climate Monitoring

- Crop Health Analysis

- Automated Irrigation

Logistics & Warehousing

Real-time inventory tracking, asset monitoring, and automated warehouse operations.

- Asset Tracking

- Inventory Management

- Environmental Control

For AI & IoT Developers

NeoMind provides a complete development platform for building edge AI applications. Start with our Extension SDK, leverage the Claude Skill for rapid development, and deploy to any edge device.

Extension SDK

Claude Skill

Open Source

Full Documentation

More Features

Built-in Capabilities

Embedded MQTT Broker

Zero-config embedded broker. Connect devices immediately without external infrastructure.
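Devices that publish to an MQTT broker are usually selected with topic filters. The sketch below implements the standard MQTT wildcard rules (`+` for one level, `#` for the remainder). The topic names in the usage note are hypothetical, since NeoMind's topic layout is not described here, and the function does not validate that `#` appears only as the last level or handle `$`-prefixed system topics.

```rust
/// Returns true when `topic` matches the MQTT subscription `filter`.
/// `+` matches exactly one level; `#` matches the parent level and everything below it.
pub fn topic_matches(filter: &str, topic: &str) -> bool {
    let mut filter_levels = filter.split('/');
    let mut topic_levels = topic.split('/');
    loop {
        match (filter_levels.next(), topic_levels.next()) {
            // Multi-level wildcard: accepts whatever remains, including nothing.
            (Some("#"), _) => return true,
            // Single-level wildcard: accepts any one level.
            (Some("+"), Some(_)) => continue,
            // Literal levels must be identical.
            (Some(f), Some(t)) if f == t => continue,
            // Both exhausted at the same time: full match.
            (None, None) => return true,
            // Length mismatch or differing literal.
            _ => return false,
        }
    }
}
```

For instance, a filter like `devices/+/telemetry` would accept `devices/ne301/telemetry` but not `devices/ne301/battery`, while `devices/#` would accept both.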

AI Auto-Onboarding

Upload samples and AI automatically generates device types, metrics, and commands.

WASM Sandbox

Sandboxed extensions at ~15KB. Secure, fast, and truly portable.

DSL Rules Engine

Write automation rules in plain English. AI translates intent into actions.

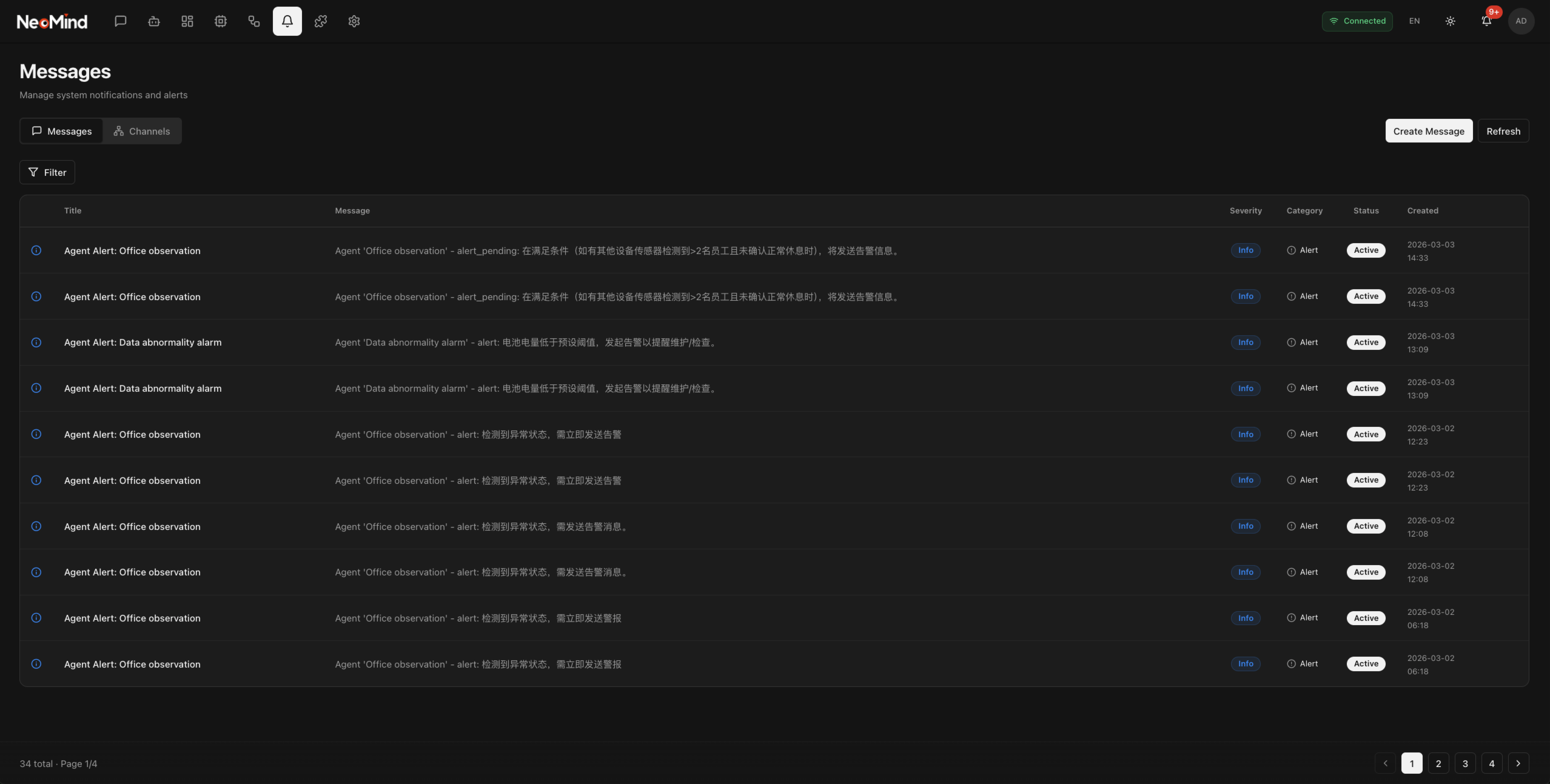

Smart Notifications

Multi-channel alerts with AI-powered summarization and priority routing.

Reliable Commands

Queue-based execution with automatic retry, timeout handling, and status tracking.
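The general shape of queue-based execution with retry can be sketched as follows. `QueuedCommand`, `Status`, and `run_queue` are hypothetical names, the transport is passed in as a closure, and timeout handling is left out to keep the example short; this is not NeoMind's command pipeline.

```rust
use std::collections::VecDeque;

/// Final outcome of one queued command, with the number of attempts made.
#[derive(Debug, PartialEq)]
pub enum Status {
    Succeeded { attempts: u32 },
    Failed { attempts: u32 },
}

/// Hypothetical queued command: a name plus a retry budget.
pub struct QueuedCommand {
    pub name: String,
    pub max_attempts: u32,
}

/// Drains the queue in FIFO order, retrying each command up to `max_attempts` times.
/// `send` receives the command name and the 1-based attempt number.
pub fn run_queue<F>(queue: &mut VecDeque<QueuedCommand>, mut send: F) -> Vec<(String, Status)>
where
    F: FnMut(&str, u32) -> Result<(), String>,
{
    let mut report = Vec::new();
    while let Some(cmd) = queue.pop_front() {
        let mut status = Status::Failed { attempts: 0 };
        for attempt in 1..=cmd.max_attempts {
            match send(&cmd.name, attempt) {
                Ok(()) => {
                    status = Status::Succeeded { attempts: attempt };
                    break;
                }
                Err(_) => status = Status::Failed { attempts: attempt },
            }
        }
        report.push((cmd.name, status));
    }
    report
}
```

The returned report is what a status-tracking view could be built on: each entry records whether the command eventually succeeded and how many attempts it took.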

Full API Access

REST + WebSocket with interactive OpenAPI docs. Every feature is programmable.

Vector Search

Semantic search across devices, rules, and history. Find anything by meaning.
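Semantic search of this kind is commonly built on embedding vectors ranked by cosine similarity. The sketch below shows that ranking step only. It assumes embeddings are already available as `f32` slices, and `cosine` and `top_k` are hypothetical helper names rather than NeoMind APIs.

```rust
/// Cosine similarity of two vectors; returns 0.0 if either has zero length.
pub fn cosine(a: &[f32], b: &[f32]) -> f32 {
    let dot: f32 = a.iter().zip(b).map(|(x, y)| x * y).sum();
    let norm_a = a.iter().map(|x| x * x).sum::<f32>().sqrt();
    let norm_b = b.iter().map(|x| x * x).sum::<f32>().sqrt();
    if norm_a == 0.0 || norm_b == 0.0 {
        0.0
    } else {
        dot / (norm_a * norm_b)
    }
}

/// Names of the `k` items most similar to `query`, best match first.
pub fn top_k<'a>(query: &[f32], items: &'a [(&'a str, Vec<f32>)], k: usize) -> Vec<&'a str> {
    let mut scored: Vec<(&str, f32)> = items
        .iter()
        .map(|(name, embedding)| (*name, cosine(query, embedding)))
        .collect();
    // Sort by descending similarity; total_cmp gives a total order over f32.
    scored.sort_by(|a, b| b.1.total_cmp(&a.1));
    scored.into_iter().take(k).map(|(name, _)| name).collect()
}
```

Real embeddings have hundreds of dimensions and would typically come from an embedding model; a linear scan like this is only reasonable for small collections.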

Tech Stack

Modern Technology

Rust 1.85+

React 18

TypeScript

Tauri 2.x

redb

Axum

Tokio

Tailwind CSS

Deployment

Choose Your Deployment

Multiple deployment options for different use cases.

Desktop App

End-user desktop application. Recommended for most users.

- macOS (DMG)

- Windows (MSI/EXE)

- Linux (AppImage/DEB)

- Includes installer wizard

Linux Server

Standalone server deployment for production environments.

- Single binary

- systemd service

- One-line install script

- Port 9375

Development

Build from source for development and customization.

- Requires Rust 1.85+

- Requires Node.js 20+

- Ollama recommended

- Fully customizable

Quick Start

Get Started in Minutes

Choose your preferred deployment method.

1

Desktop App

Download the latest release for your platform from GitHub Releases.

# Download from Releases page

https://github.com/camthink-ai/NeoMind/releases

macOS .dmg · Windows .msi · Linux .deb

2

Linux Server

One-line install for server deployment with systemd service.

# One-line install

curl -fsSL https://raw.githubusercontent.com/camthink-ai/NeoMind/main/scripts/install.sh | bash

systemd service · Auto-start · Port 9375

3

Development

Build from source with Rust and Node.js. Requires Ollama for local LLMs.

# Install Ollama and pull model

curl -fsSL https://ollama.com/install.sh | sh

ollama pull qwen3-vl:2b

# Clone and run

git clone https://github.com/camthink-ai/NeoMind.git

cd NeoMind && cargo run -p neomind

Rust 1.85+ · Node.js 20+ · localhost:9375

Device Types

Supported Hardware

NeoMind includes built-in device type definitions for seamless integration.

Recommended

CamThink Edge AI Camera

ne301_camera

Edge AI camera with built-in YOLOv8 Nano object detection, supporting 80 classes. WiFi connectivity and battery powered.

Battery Percentage

Detection Count

Inference Time (ms)

Image Data

Capture Sleep

CamThink Sensing Camera

ne101_camera

Low-power sensing camera with battery operation and button-triggered capture. Perfect for remote monitoring scenarios.

Battery

Battery Voltage

Image Size

Need a Custom Device Type?

Create your own device type definitions or contribute to our growing library.

Edge Deployment

Run NeoMind at the Edge

Deploy AI-powered automation on industrial-grade edge hardware. Fully offline, low latency, complete data sovereignty.

Camera

Sensor

PLC

Gateway

Actuator

Extensions

MQTT

Cloud LLM Optional

Local LLM

NeoMind LLM + Automation

Fully Offline

LLM inference, MQTT broker, automation—all run on edge. No cloud required.

Sub-100ms Latency

Real-time response for critical industrial automation scenarios.

Data Sovereignty

All data stays on-premise. Meet strict compliance requirements.

Quick Deploy

JetPack 6.0+ with CUDA, TensorRT, Ollama pre-configured.

Recommended

NVIDIA Jetson

Orin NX 16GB

157 TOPS AI compute. Run qwen3-vl:2b locally with full NeoMind stack.

- 1024 CUDA + 32 Tensor cores

- 16GB LPDDR5 for LLM

- -25°C to 60°C industrial grade

Other supported hardware:

- x86: Industrial PC + GPU (RTX 3060+ for multi-LLM)

- ARM: Raspberry Pi 5 (Cloud LLM only)

- Mac: Apple Silicon (Development & testing)

Extensions

Extension Marketplace

Extend NeoMind with powerful community and official extensions.

Global city weather data and 3-day forecast. Supports temperature, humidity, wind speed, and cloud cover metrics.

Temp (°C) · Humidity (%) · Wind (km/h) · Cloud (%)

Refresh · Set City

Stateless streaming extension for single-image processing. Supports JPEG, PNG, and WebP formats and object detection.

Detection Count · Bounding Boxes

Reset Stats

Stateful streaming extension for session-based video processing with real-time YOLO detection.

h264/h265 · Sessions

Get Session Info

AssemblyScript WASM extension example. Fast compilation (~1s), small binary (~15KB), 90-95% of native performance.

Counter · Temperature · Humidity

Increment Counter · Reset Counter

Build Your Own Extension

Create custom extensions using the Rust SDK or WASM. AI-assisted development via Claude Skill.

FAQ

Frequently Asked Questions

Common questions about NeoMind and edge AI deployment.

What is NeoMind?

NeoMind is an edge-deployed LLM agent platform for IoT automation. It enables autonomous device management and automated decision-making through Large Language Models. You can control devices, create automation rules, and query system status using natural language.

Which LLM backends are supported?

NeoMind supports multiple LLM backends including Ollama (recommended for local deployment), OpenAI, Anthropic Claude, Google AI, xAI (Grok), DeepSeek, Qwen, GLM, MiniMax, and custom OpenAI-compatible APIs. You can switch providers as needed.

What are the system requirements?

NeoMind is built with Rust and designed to be lightweight. Basic requirements: macOS 10.15+, Windows 10+, or a modern Linux distribution. To run local LLMs via Ollama, you'll need sufficient RAM (8GB+ recommended), preferably with a GPU for better performance.

How do devices connect?

Devices can connect via multiple protocols: MQTT, HTTP REST API, and Webhooks. NeoMind includes an embedded MQTT broker, so no external broker is needed. Auto-discovery and AI-assisted onboarding simplify device registration.

What is the DSL Rules Engine?

The DSL (Domain Specific Language) Rules Engine allows you to write automation rules in a human-readable format. Example: RULE "Alert" WHEN device.cpu > 85 FOR 5m DO send_alert() END. You can also create rules using natural language, and the LLM will translate them to DSL syntax.

What are extensions?

Extensions extend NeoMind's capabilities by adding custom metrics and commands. They can be built as native libraries (.so/.dylib/.dll) or WASM modules. Extensions use the same type system as devices and can provide dashboard cards. Use our Claude Skill for AI-assisted extension development.

Can NeoMind run offline?

Yes! Using Ollama as your LLM backend, NeoMind can run completely offline. This suits edge deployments, secure environments, and scenarios where data privacy is critical. All processing happens locally on your hardware.

Is NeoMind open source?

Yes! NeoMind is licensed under Apache-2.0. The source code is available on GitHub. We welcome community contributions for device types, extensions, and core features.

Get Started with NeoMind

Download NeoMind and start building edge AI applications with LLM-powered automation.