Edge AI vs. Cloud-Based Vision System — Architecture, TCO, and Deployment Fit

You’ve committed to using Vision AI to solve a monitoring problem. At this stage, the decision is no longer about the camera itself, but about where inference runs in your system. In practice, this means choosing between two architectures: an IP camera feeding a cloud-based vision pipeline, or an on-device edge AI system that performs classification locally.

This article compares both across six technical dimensions, with 3-year TCO considerations for 10, 50, and 100 units. Waste monitoring is used as the reference scenario, but the same trade-offs apply to many vision AI deployments.

The Architectural Decision You’re Actually Making

Both approaches use a camera and a trained vision model. The difference is where the model runs. In an IP camera + Cloud Vision setup, the camera streams or snapshots images to a cloud API that returns classification results. In an edge AI camera setup, the model runs on a dedicated NPU inside the camera itself — no cloud round-trip, no per-call fee, no connectivity dependency for inference.

That architectural difference propagates across every dimension that matters for a production deployment: latency, cost structure, offline resilience, data privacy, and model flexibility. Neither architecture is universally correct — the right choice depends on your specific project constraints. This article works through each dimension honestly.

- <50ms: NE301 on-device inference latency (Neural-ART NPU, no network required)
- 200ms–2s: typical cloud Vision API round-trip, including network and processing time
- $0: per-inference cost on NE301 after hardware purchase, with no recurring API fees

How Each Architecture Works

Before comparing dimensions, it’s worth being precise about what each data path actually looks like — because the differences downstream all trace back to this.

IP Camera + Cloud Vision API (cloud-dependent)

1. IP camera captures an RTSP stream or triggers a snapshot at an interval.
2. Frame extraction: your server or gateway grabs a frame, resizes it, and encodes it as JPEG.
3. Upload to the cloud API (AWS Rekognition, Azure Vision, Google Vision): +100–800ms network upload.
4. Cloud inference, with the result returned as JSON: +50–1200ms processing.
5. Backend processes the result and triggers an alert or routing update.

NE301 On-Device Edge AI (on-device)

1. NE301 captures a frame on schedule or on an event trigger.
2. Neural-ART NPU runs the YOLOv8 model locally: <50ms total, no network needed.
3. Result is published via MQTT or HTTP: class label, confidence, timestamp, optional image URL.
4. Backend subscribes to the MQTT topic and triggers an alert or routing update.

The IP Camera path requires a continuously running intermediate service — something that grabs frames, calls the API, and processes results. This adds operational complexity and a failure point that has nothing to do with the camera or the model. The NE301 path eliminates that intermediary: the camera is the inference unit, and the only outbound traffic is a lightweight JSON payload.

Six Dimensions That Matter for Your Deployment Decision

1. Inference Latency

For most bin monitoring scenarios (4–10 captures per day), raw inference latency is not the critical constraint. If a bin overflows at 9am and the next scheduled check is at noon, neither architecture catches it sooner than the capture interval allows. Latency becomes operationally significant only when you need near-real-time response, such as monitoring high-traffic bins at minute-level intervals.

At that frequency, the NE301’s sub-50ms on-device inference gives you deterministic response time regardless of network conditions. A cloud API round-trip introduces 200ms–2s of variable latency that depends on your upload bandwidth, API service health, and geographic distance to the cloud region. At scale, that variance compounds.

2. Connectivity and Offline Resilience

This is the dimension where the architectural difference is most consequential for outdoor deployments. An IP camera + Cloud API system cannot classify without internet connectivity. If the cellular signal drops, the WiFi AP goes down, or the cloud service has an outage, your monitoring stops. For bins in parks, construction sites, underground car parks, or rural facilities, intermittent connectivity is the norm, not the exception.

The NE301 classifies entirely on-device. Connectivity is only needed to publish the result. If the network is down, the NE301 can buffer results locally and publish when connectivity resumes — classification continues uninterrupted. This is a fundamental architectural advantage for any outdoor or infrastructure deployment.
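The buffer-and-publish behaviour described above follows the general store-and-forward pattern. The sketch below illustrates that pattern only; it is not NE301 firmware, and the class name, the `publish` callback, and the `max_items` limit are all hypothetical choices made for this example.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForward:
    """Queue results while offline and flush them in order once publishing works."""

    def __init__(self, publish: Callable[[dict], bool], max_items: int = 1000):
        # publish is assumed to return True on success and False on failure.
        self._publish = publish
        # Bounded queue: when full, the oldest pending result is dropped.
        self._pending: Deque[dict] = deque(maxlen=max_items)

    def submit(self, result: dict) -> None:
        """Record a new classification result, then try to send everything pending."""
        self._pending.append(result)
        self.flush()

    def flush(self) -> int:
        """Send pending results oldest-first; stop at the first failure."""
        sent = 0
        while self._pending:
            if not self._publish(self._pending[0]):
                break  # still offline: keep this result for the next attempt
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._pending)
```

Classification keeps producing results through `submit()` regardless of link state; calling `flush()` after a reconnect drains the backlog in capture order.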

3. Data Privacy and Image Transmission

The IP Camera + Cloud Vision path requires the raw image to leave the device and travel to a third-party cloud service. For public space deployments, this raises GDPR, CCPA, and local privacy regulation questions that require legal review and may require explicit data processing agreements with the cloud vendor.

The NE301 processes images entirely on-device. Raw images never leave the camera unless your backend explicitly requests them via the image_url field in the MQTT payload. The classification result (a label and confidence score) is structurally non-identifiable. For public-space bin monitoring, this eliminates the privacy compliance overhead associated with cloud image transmission.

4. Model Flexibility and Custom Classes

Cloud Vision APIs (AWS Rekognition, Azure Computer Vision, Google Vision) provide general-purpose pre-trained models. Their classification categories are defined by the vendor. If your waste monitoring project requires classes the API doesn’t cover — contaminated waste, specific industrial materials, multi-stream recycling categories — you either use a custom model endpoint (which requires training on the vendor’s platform and incurs additional costs) or you approximate with available labels.

The NE301 runs a fully open model pipeline. You define the classes, train on your own dataset using YOLOv8, export to INT8 quantised TFLite format, and deploy via the Web UI or OTA. No vendor dependency on model definition, no proprietary training platform, no per-model hosting fee. The model is yours — you own it, retrain it, and push updates across your fleet independently.

5. Power and Outdoor Deployment

IP cameras require continuous power and typically need mains or PoE infrastructure. Running a live RTSP stream for frame extraction also means the camera is active continuously — even when nothing is being classified. That power profile makes battery or solar deployment impractical at any meaningful inference frequency.

The NE301 is engineered for deep-sleep operation. In event-triggered or scheduled capture mode, the device draws 6.1µA in standby — waking only to capture, infer, and transmit. Based on CamThink’s measured power data, a standard 4×AA battery configuration (approximately 1,750 mAh) delivers:

| Capture Frequency | Mode | Daily Consumption (mAh) | Battery Life |
|---|---|---|---|
| 1× / day | WiFi | 0.36 | 13.3 years |
| 4× / day | WiFi | 0.71 | 6.7 years |
| 10× / day | WiFi | 2.29 | 2.1 years |
| 4× / day | LTE Cat-1 (Global) | 1.28 | 3.7 years |
| 10× / day | LTE Cat-1 (Global) | 4.42 | 1.1 years |

Source: NE301 Battery Life documentation. Lab-measured values; real-world results vary by temperature and signal conditions.
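The battery life figures follow from dividing capacity by daily consumption. A minimal sketch of that arithmetic, using the 1,750 mAh capacity and the daily values from the table; the function name is hypothetical, and the model deliberately ignores self-discharge, temperature, and signal conditions.

```python
def battery_life_years(capacity_mah: float, daily_mah: float) -> float:
    """Estimated runtime in years: capacity divided by average daily consumption."""
    if daily_mah <= 0:
        raise ValueError("daily_mah must be positive")
    return capacity_mah / daily_mah / 365.0


CAPACITY_MAH = 1750  # approximate 4xAA capacity quoted in the text

# (capture frequency, mode, daily consumption in mAh), copied from the table
ROWS = [
    ("1x/day", "WiFi", 0.36),
    ("4x/day", "WiFi", 0.71),
    ("10x/day", "WiFi", 2.29),
    ("4x/day", "LTE Cat-1", 1.28),
    ("10x/day", "LTE Cat-1", 4.42),
]

for freq, mode, daily in ROWS:
    years = battery_life_years(CAPACITY_MAH, daily)
    print(f"{freq:>7}  {mode:<10} {years:.1f} years")
```

The computed values agree with the published column to within rounding, which suggests the table is a straight capacity-over-consumption estimate rather than a derated field figure.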

An IP camera running continuous RTSP streaming consumes approximately 3–8W depending on resolution and compression. At that power draw, meaningful battery operation is simply not viable. The NE301’s deep-sleep architecture makes battery-powered outdoor deployment practical for years at typical monitoring frequencies (for example, about 6.7 years at 4 captures per day over WiFi, or 3.7 years over LTE Cat-1) without mains or PoE infrastructure.

6. Integration Architecture

The IP Camera + Cloud Vision path requires you to build and maintain a frame extraction service: something that subscribes to the RTSP stream, pulls frames at the right interval, calls the cloud API, handles errors and retries, and feeds results to your backend. That service is your responsibility — it adds DevOps overhead, another failure point, and an integration layer you need to version and maintain.
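To make the maintenance burden concrete, here is a rough sketch of the core of such a service: grab one frame, call the API, and retry with exponential backoff. `grab_frame` and `call_cloud_api` are hypothetical placeholders for your RTSP frame grabber and your vendor's client; no real vendor API is shown, and the retry policy is an assumption.

```python
import time
from typing import Callable, Optional


def classify_with_retry(
    grab_frame: Callable[[], bytes],
    call_cloud_api: Callable[[bytes], dict],
    max_attempts: int = 3,
    base_delay_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[dict]:
    """Grab one frame and classify it, retrying on network or I/O errors.

    Returns the API result, or None if every attempt failed.
    """
    for attempt in range(max_attempts):
        try:
            frame = grab_frame()          # placeholder: JPEG bytes from the camera
            return call_cloud_api(frame)  # placeholder: request to the vision API
        except OSError:
            # ConnectionError and TimeoutError are subclasses of OSError.
            if attempt < max_attempts - 1:
                sleep(base_delay_s * 2 ** attempt)  # back off: 1s, 2s, 4s, ...
    return None
```

A production service would also need scheduling, logging, credential handling, and monitoring around this loop, which is the operational overhead the paragraph above refers to.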

The NE301 publishes results directly to an MQTT broker or via HTTP POST. Your backend subscribes to the topic and receives structured JSON. No intermediate service is required. The NE301 itself handles capture scheduling, inference, result formatting, and connectivity management. The integration surface is the MQTT topic and payload schema, documented in the MQTT Data Interaction guide.
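On the backend side, handling a result can be as small as parsing one JSON message and applying a rule. The sketch below assumes field names (`label`, `confidence`, `timestamp`) that are illustrative only; `image_url` is the one field named in this article. Check the MQTT Data Interaction guide for the actual schema. The alert label and threshold are also hypothetical.

```python
import json
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass
class Classification:
    label: str
    confidence: float
    timestamp: str
    image_url: Optional[str] = None  # present only when an image is made available


def parse_result(payload: bytes) -> Classification:
    """Decode one JSON message. Field names are assumed, not the official schema."""
    data = json.loads(payload)
    return Classification(
        label=data["label"],
        confidence=float(data["confidence"]),
        timestamp=data["timestamp"],
        image_url=data.get("image_url"),
    )


def needs_alert(
    result: Classification,
    alert_labels: FrozenSet[str] = frozenset({"overflowing"}),  # hypothetical class
    min_confidence: float = 0.8,                                 # hypothetical threshold
) -> bool:
    """Alert only on a watched class that was predicted with enough confidence."""
    return result.label in alert_labels and result.confidence >= min_confidence
```

In practice `parse_result` would be called from the message callback of whichever MQTT client library your backend uses, with the raw message payload passed in.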

TCO Calculator: 3-Year Cost Comparison

Total cost of ownership over three years depends on four variables: number of units, inference frequency, cloud API pricing, and SIM data costs for cellular deployments. Compare both approaches using your own project parameters for each.

NE301 hardware cost: $199.90/unit (WiFi) or $258.00/unit (LTE). IP camera cost varies by model and is an input you supply. Infrastructure costs (MQTT broker, frame extraction service) are not included; including them would favour the NE301 path, which needs no intermediate service. Cloud API pricing is based on publicly available 2025 rates; volume discounts may apply. Battery life data source: CamThink Wiki.

📊 What the Default Numbers Show

At the default settings (50 units, 4×/day, Azure Vision pricing, WiFi), the NE301 fleet costs approximately $10,000 in hardware with zero recurring inference cost. The IP Camera + Cloud path at $120/unit hardware and $1.50 per 1,000 API calls comes to $6,000 in hardware plus 219,000 API calls over 3 years (50 units × 4 calls/day × 1,095 days), or about $329 in API fees. At this frequency and scale, hardware cost dominates: API fees alone do not recover the NE301’s hardware premium of roughly $4,000, so the cost case for the NE301 rests on items excluded from this comparison, such as the frame extraction service. API cost scales linearly with unit count and capture frequency, so the recurring-cost gap widens at higher frequencies (10×+/day, 100+ units).
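The comparison reduces to a short formula: API calls = units × captures per day × 365 × years, and cloud TCO = camera hardware + calls ÷ 1,000 × price per 1,000 calls. A minimal sketch using the prices quoted in this section; the function names are hypothetical, and SIM data and infrastructure costs are deliberately left out, as in the text.

```python
def api_calls(units: int, captures_per_day: float, years: int = 3) -> float:
    """Total cloud API calls made by the fleet over the period."""
    return units * captures_per_day * 365 * years


def cloud_tco(units: int, camera_cost: float, captures_per_day: float,
              price_per_1000_calls: float, years: int = 3) -> float:
    """IP camera hardware plus per-call API fees (no infrastructure or SIM costs)."""
    calls = api_calls(units, captures_per_day, years)
    return units * camera_cost + calls / 1000 * price_per_1000_calls


def edge_tco(units: int, unit_cost: float) -> float:
    """Edge hardware only: no per-inference fee after purchase."""
    return units * unit_cost


# Prices quoted in this section: $120 IP camera, $1.50 per 1,000 calls,
# $199.90 per NE301 (WiFi). Capture frequency: 4 per day.
for units in (10, 50, 100):
    cloud = cloud_tco(units, 120.0, 4, 1.50)
    edge = edge_tco(units, 199.90)
    print(f"{units:>3} units  cloud ${cloud:,.2f}  edge ${edge:,.2f}")
```

Adding your own estimates for the frame extraction service, SIM plans, and power installation as extra terms is the natural next step, since those are the items that separate the two paths most in practice.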

When Each Architecture Is the Right Choice

Neither approach is universally superior. Here is an honest assessment of which fits which scenario — including cases where cloud Vision API is the pragmatic choice.

| Scenario | IP Cam + Cloud | NE301 Edge AI |
|---|---|---|
| Outdoor bins, no mains power (parks, streets, remote sites) | ✗ Not viable: continuous power required | ✓ Purpose-built: 2–13 yr battery life |
| Intermittent connectivity (underground, rural, weak signal) | ✗ Fails without internet | ✓ Classifies offline: buffers, publishes on reconnect |
| Custom waste classes (industrial, multi-stream recycling, contamination detection) | △ Custom endpoint needed: vendor platform + added cost | ✓ Fully open pipeline: YOLOv8 + INT8 quantisation |
| High-frequency monitoring (10+ captures/day, near real-time alerts) | ✗ API cost scales linearly + latency variance | ✓ Zero marginal cost: fixed hardware, no per-call fee |
| Privacy-sensitive public space (GDPR / CCPA regions, municipal contracts) | ✗ Raw images leave device: DPA with cloud vendor required | ✓ Images stay on-device: only result label transmitted |
| Low-frequency PoC, indoor, WiFi (1–3 captures/day, temporary pilot, mains power available) | ✓ Reasonable choice: low API cost, uses existing camera | ✓ Also viable: higher upfront, zero recurring |
| Existing IP camera infrastructure (client already has cameras, adding AI layer) | ✓ Add-on approach: reuse hardware investment | △ Replace vs. augment: NG4500 can process IP cam feeds |

Existing IP Camera Infrastructure

If your client already has IP cameras installed and wants to add AI classification without replacing hardware, the NeoEdge NG4500 (NVIDIA Jetson Orin, up to 157 TOPS) can ingest multiple IP camera feeds and run inference locally — giving you on-device AI without replacing the camera fleet. This is the correct path when the constraint is "use existing cameras," not "buy new hardware."

Decision Tree: Which Architecture for Your Project

Answer these four questions to reach a recommendation:

Q1: Does the deployment site have reliable mains power at every bin location?

Q2: Is internet connectivity reliable and always-on at every bin location?

Q3: Do you need custom classification classes beyond what standard cloud Vision APIs provide?

Q4: Is the deployment high-frequency (6+ captures/day) or privacy-sensitive (public space, GDPR region)?

NE301 On-Device AI: Outdoor · offline-resilient · custom model · high-frequency · privacy

IP Camera + Cloud Vision: Indoor PoC · existing camera infra · low-frequency · standard classes
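The four questions can be expressed as a small function. The branching below is an assumption made for illustration: it treats any single answer that points away from the cloud path (no mains power, unreliable internet, custom classes, or high frequency/privacy sensitivity) as enough to recommend the NE301. The function and parameter names are hypothetical.

```python
def recommend(
    mains_power: bool,           # Q1: reliable mains power at every bin location?
    reliable_internet: bool,     # Q2: reliable, always-on connectivity?
    needs_custom_classes: bool,  # Q3: classes beyond standard cloud Vision APIs?
    high_freq_or_privacy: bool,  # Q4: 6+ captures/day or privacy-sensitive?
) -> str:
    """Return an architecture recommendation under the assumed branching rule."""
    if (
        not mains_power
        or not reliable_internet
        or needs_custom_classes
        or high_freq_or_privacy
    ):
        return "NE301 On-Device AI"
    return "IP Camera + Cloud Vision"


# Indoor pilot on mains power and stable WiFi, standard classes, 2 captures/day.
print(recommend(True, True, False, False))
# Park bins on battery with patchy cellular coverage.
print(recommend(False, False, False, True))
```

Projects constrained to reuse installed IP cameras sit outside these four questions; that case is covered in the Existing IP Camera Infrastructure section.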

CamThink Hardware for Each Architecture Path

NeoEyes NE301

On-Device AI · Replaces IP Camera + Cloud

Self-contained edge AI — inference, connectivity, and MQTT publishing in one unit

- STM32N6 + Neural-ART NPU · 0.6 TOPS

- YOLOv8 on-device · <50ms inference

- 4MP · 51°/88°/137° FOV

- IP67 · -20℃ to 50℃

- 6.1µA deep sleep · PoE, USB, or battery

- WiFi 6 + BT 5.4 · optional LTE 4G / PoE

- MQTT + HTTP native · open firmware

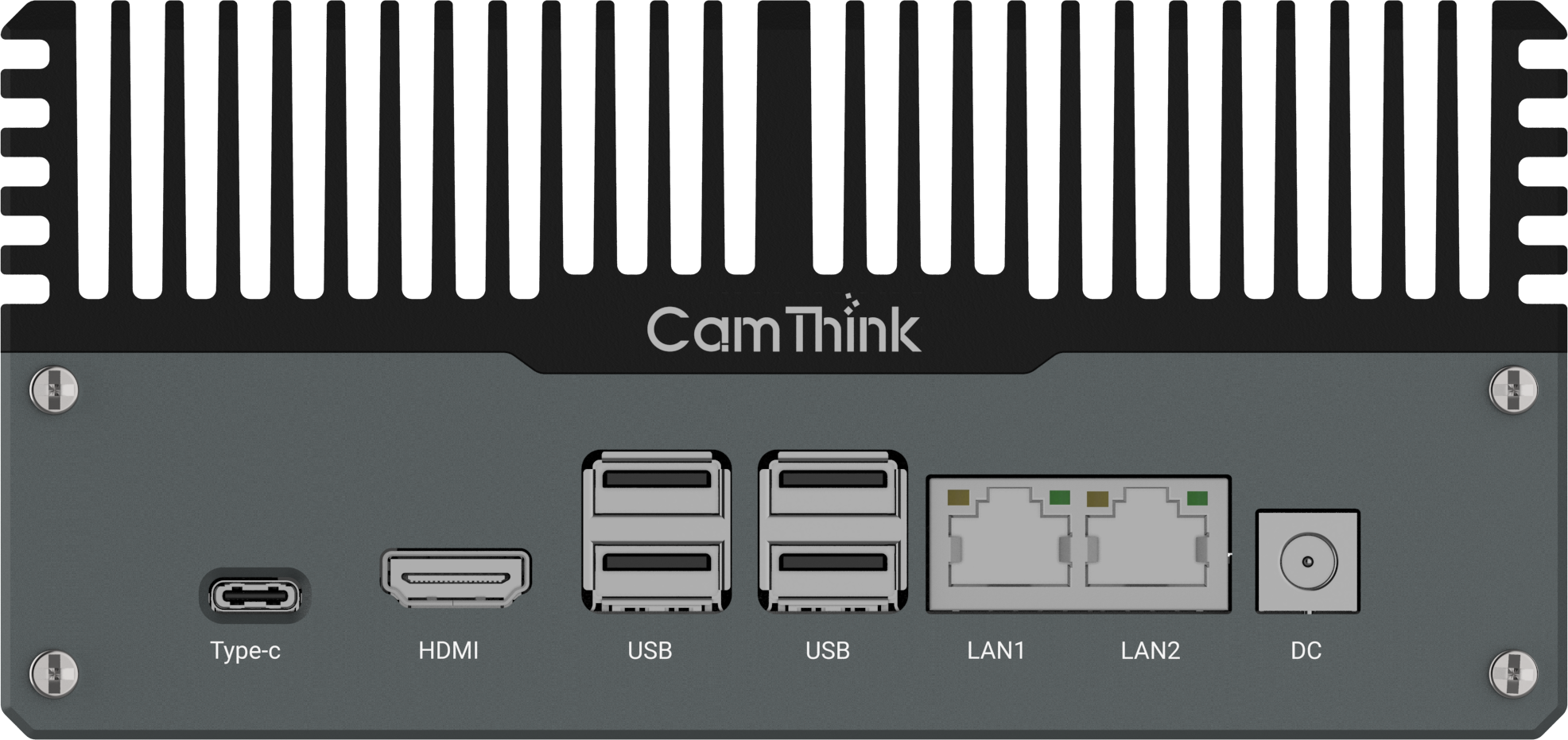

NeoEdge NG4500

Edge Server · Processes Existing IP Camera Feeds

When you need to keep existing IP cameras and move inference off the cloud

- NVIDIA Jetson Orin NX/Nano · up to 157 TOPS

- Ingests multiple IP camera feeds locally

- Runs inference on-premise — no cloud required

- IP67 · -20℃ to 60℃

- JetPack 6.0+ · VLM / LLM support

- CAN · RS232 · RS485 · multi I/O

- Fanless · 12–36V DC

Full Comparison Table

| Dimension | IP Camera + Cloud Vision API | NE301 On-Device Edge AI |
|---|---|---|
| Inference location | Cloud (third-party servers) | On-device Neural-ART NPU |
| Inference latency | 200ms–2s (network + processing) | <50ms (no network needed) |
| Internet required for inference | Yes, always | No, classifies offline |
| Per-inference cost | $0.50–$1.50 per 1,000 calls | $0 (hardware is a one-time cost) |
| Raw image leaves device | Yes, uploaded to cloud | No, stays on-device by default |
| Custom model classes | Vendor platform + added cost | Fully open · YOLOv8 native |
| Battery / outdoor deployment | Requires continuous mains power | 6.1µA sleep · 2–13 yr battery |
| Integration architecture | Camera + frame-extraction service + cloud API + backend | Camera → MQTT/HTTP → backend |
| Infrastructure complexity | Frame extraction service required | No intermediate service needed |
| Model ownership | Vendor-controlled | Fully owned · OTA updatable |
| Best fit | Indoor PoC · existing camera infra · standard classes · low frequency | Outdoor · offline · custom model · high-frequency · privacy-sensitive |

FAQ

Can the NE301 work with an existing IP camera network instead of replacing it?

Not directly. The NE301 is a standalone camera with a built-in NPU, not a processing unit for external camera streams. If you need to add on-device AI inference to an existing IP camera network without replacing the cameras, the NeoEdge NG4500 (Jetson Orin, up to 157 TOPS) is the correct product: it ingests RTSP feeds from multiple IP cameras and runs inference locally, eliminating the cloud dependency while preserving the existing camera infrastructure.

How does the NE301 handle connectivity outages during deployment?

The NE301 classifies on-device regardless of network state; inference does not depend on connectivity. If the MQTT broker is unreachable, the device can buffer results locally and publish when the connection is restored. The classification schedule continues uninterrupted. This is a fundamental architectural advantage over cloud Vision systems, where connectivity loss means a complete halt to monitoring.

What cloud Vision APIs can realistically be compared to the NE301 approach?

The most commonly used general-purpose Vision APIs for object/scene classification are AWS Rekognition (DetectLabels), Azure Computer Vision, and Google Cloud Vision API. All three charge per API call, typically $1.00–$1.50 per 1,000 calls at standard volume. Custom model endpoints (AWS Rekognition Custom Labels, Azure Custom Vision) incur additional training and hosting costs on top of inference costs. For a waste bin monitoring use case requiring custom fill-level classes, all three cloud providers would require a custom model configuration, adding significant setup cost and vendor dependency that the NE301's open YOLOv8 pipeline avoids.

Does the NE301 require a GPU server for model training?

Training a YOLOv8 classification model requires a machine with reasonable compute. An NVIDIA GPU server is recommended for training speed, but the CamThink AI Tool Stack also runs on a Mac with Apple Silicon (M-series chips). A model for waste bin fill-level classification (6 classes, ~1,500 images) typically trains in 15–40 minutes on a mid-range GPU. The quantisation step (INT8 export for the NE301 NPU) runs in under 5 minutes on the same machine. You do not need a cloud-hosted training environment unless you prefer it. See the AI Tool Stack documentation for environment requirements.

What is the NE301's inference accuracy compared to cloud Vision APIs on waste classification?

Cloud Vision APIs perform well on general object categories but are not trained on waste bin fill-level classes, so you would need a custom model regardless. When comparing a custom-trained model on NE301 against a custom model endpoint on a cloud provider, accuracy is primarily determined by training dataset quality and class balance, not the inference platform. In CamThink's field validation with a YOLOv8 model trained on waste bin images, the NE301 achieved over 90% classification accuracy on the 6-class fill-level model. The difference between cloud and edge at the same model architecture is negligible; the meaningful accuracy variable is your training data.

Is there a scenario where the cloud Vision API approach is genuinely better?

Yes. If your project is a short-duration indoor PoC (2–4 weeks), your client already has a stable WiFi network and mains-powered IP cameras, you need standard object classes that the API covers out of the box, and inference frequency is low (1–3 times per day), the cloud Vision API approach has lower upfront cost and faster time-to-first-demo. The NE301's advantages (battery life, offline operation, zero recurring cost, open model pipeline) become decisive at production scale, in outdoor environments, or when classification frequency or privacy requirements make cloud dependency untenable.

🛠 Technical Resources

- NE301 Quick Start: first working deployment in under 2 hours
- MQTT Integration Guide: payload schema, topic structure, broker config
- AI Tool Stack: dataset → YOLOv8 training → INT8 export → deploy
- Battery Life Calculator: precise power data for all frequencies and modes
- GitHub (CamThink-AI): open firmware, model examples, MQTT samples
- Discord Community: ask integration questions, share builds